by Joche Ojeda | Jan 21, 2025 | Uncategorized

During my recent AI research break, I found myself taking a walk down memory lane, reflecting on my early career in data analysis and ETL operations. This journey brought me back to an interesting aspect of software development that has evolved significantly over the years: the management of shared libraries.

The VB6 Era: COM Components and DLL Hell

My journey began with Visual Basic 6, where shared libraries were managed through COM components. The concept seemed straightforward: store shared DLLs in the Windows System directory (typically C:\Windows\System32) and register them using regsvr32.exe. The Windows Registry kept track of these components under HKEY_CLASSES_ROOT.

However, this system had a significant flaw that we now famously know as “DLL Hell.” Let me share a practical example: Imagine you have two systems, A and B, both using Crystal Reports 7. If you uninstall either system, the other would break because the shared DLL would be removed. Version control was primarily managed by location, making it a precarious system at best.

Enter .NET Framework: The GAC Revolution

When Microsoft introduced the .NET Framework, it brought a sophisticated solution to these problems: the Global Assembly Cache (GAC). Located at C:\Windows\Microsoft.NET\assembly\ (for .NET 4.0 and later), the GAC represented a significant improvement in shared library management.

The most revolutionary aspect was the introduction of assembly identity. Instead of relying solely on filenames and locations, each assembly now had a unique identity consisting of:

- Simple name (e.g., “MyCompany.MyLibrary”)

- Version number (e.g., “1.0.0.0”)

- Culture information

- Public key token

A typical assembly full name would look like this:

MyCompany.MyLibrary, Version=1.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089

This robust identification system meant that multiple versions of the same assembly could coexist peacefully, solving many of the versioning nightmares that plagued the VB6 era.
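To make the four parts of an assembly identity concrete, here is a minimal sketch that parses the full name shown above with `System.Reflection.AssemblyName`. The library name is the hypothetical one from the example, and parsing the string does not load any assembly. `TokenToHex` is a helper introduced only for this illustration.

```csharp
using System;
using System.Reflection;

public static class AssemblyIdentityDemo
{
    // Formats the public key token bytes as the lowercase hex string used in full names.
    public static string TokenToHex(byte[] token) =>
        token == null ? "null" : BitConverter.ToString(token).Replace("-", "").ToLowerInvariant();

    public static void Main()
    {
        // Hypothetical assembly name taken from the text; nothing is loaded here.
        var identity = new AssemblyName(
            "MyCompany.MyLibrary, Version=1.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089");

        Console.WriteLine(identity.Name);                             // simple name
        Console.WriteLine(identity.Version);                          // version number
        Console.WriteLine(identity.CultureName);                      // empty string for the neutral culture
        Console.WriteLine(TokenToHex(identity.GetPublicKeyToken()));  // public key token
    }
}
```

Two assemblies that differ in any of these parts are treated as different assemblies, which is what allows side-by-side versions.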

The Modern Approach: Private Dependencies

Fast forward to 2025, and we’re living in what I call the “brave new world” of cross-platform .NET. The landscape has changed dramatically. Storage is no longer the premium resource it once was, and the trend has shifted away from shared libraries toward application-local deployment.

Modern applications often ship with their own private version of the .NET runtime and dependencies. This approach eliminates the risks associated with shared components and gives applications complete control over their runtime environment.

Reflection on Technology Evolution

While researching Blazor’s future and seeing discussions about Microsoft’s technology choices, I’m reminded that technology evolution is a constant journey. Organizations move slowly in production environments, and that’s often for good reason. The shift from COM components to GAC to private dependencies wasn’t just a technical evolution – it was a response to real-world problems and changing resources.

This journey from VB6 to modern .NET reveals an interesting pattern: sometimes the best solution isn’t sharing resources but giving each application its own isolated environment. It’s fascinating how the decreasing cost of storage and increasing need for reliability has transformed our approach to dependency management.

As I return to my AI research, this trip down memory lane serves as a reminder that while technology constantly evolves, understanding its history helps us appreciate the solutions we have today and better prepare for the challenges of tomorrow.

by Joche Ojeda | Jan 20, 2025 | ADO, ADO.NET, Database, dotnet

When I first encountered the challenge of migrating hundreds of Visual Basic 6 reports to .NET, I never imagined it would lead me down a path of discovering specialized data analytics tools. Today, I want to share my experience with ADOMD.NET and how it could have transformed our reporting challenges, even though we couldn’t implement it due to our database constraints.

The Challenge: The Sales Gap Report

The story begins with a seemingly simple report called “Sales Gap.” Its purpose was critical: identify periods when regular customers stopped purchasing specific items. For instance, if a customer typically bought 10 units monthly from January to May, then suddenly stopped in June and July, sales representatives needed to understand why.
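The core idea of the report can be sketched in a few lines. This is not the original VB6 report logic, only an illustration under simple assumptions: purchases are reduced to their dates for one customer and one item, and a "gap" is any month between the first purchase and the end of the report period with no purchase at all. The names `SalesGapDemo` and `FindGapMonths` are made up for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;

public static class SalesGapDemo
{
    // Returns every month from the first purchase up to reportEnd that has no purchase.
    public static List<DateTime> FindGapMonths(IEnumerable<DateTime> purchaseDates, DateTime reportEnd)
    {
        // Normalize each purchase date to the first day of its month.
        var purchasedMonths = new HashSet<DateTime>(
            purchaseDates.Select(d => new DateTime(d.Year, d.Month, 1)));

        var gaps = new List<DateTime>();
        if (purchasedMonths.Count == 0) return gaps;

        var lastMonth = new DateTime(reportEnd.Year, reportEnd.Month, 1);
        for (var month = purchasedMonths.Min(); month <= lastMonth; month = month.AddMonths(1))
        {
            if (!purchasedMonths.Contains(month)) gaps.Add(month);
        }
        return gaps;
    }

    public static void Main()
    {
        // Made-up sample data: one purchase per month from January to May 2024.
        var purchases = Enumerable.Range(1, 5).Select(m => new DateTime(2024, m, 15));

        foreach (var gap in FindGapMonths(purchases, new DateTime(2024, 7, 31)))
            Console.WriteLine(gap.ToString("yyyy-MM", CultureInfo.InvariantCulture));
        // prints 2024-06 and 2024-07
    }
}
```

The expensive part in the real report was not this loop but gathering the purchase history from several transactional tables.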

This report required complex queries across multiple transactional tables:

- Invoicing

- Sales

- Returns

- Debits

- Credits

Initially, the report took about a minute to run. As our data grew, so did the execution time—eventually reaching an unbearable 15 minutes. We were stuck with a requirement to use real-time transactional data, making traditional optimization techniques like data warehousing off-limits.

Enter ADOMD.NET: A Specialized Solution

ADOMD.NET (ActiveX Data Objects Multidimensional .NET) emerged as a potential solution. Here’s why it caught my attention:

Key Features:

- Multidimensional Analysis

Instead of SQL, ADOMD.NET typically sends MDX (Multidimensional Expressions) queries, a language designed specifically for analytical queries against cubes. Here’s a basic example:

string mdxQuery = @"

SELECT

{[Measures].[Sales Amount]} ON COLUMNS,

{[Date].[Calendar Year].MEMBERS} ON ROWS

FROM [Sales Cube]

WHERE [Product].[Category].[Electronics]";

- Performance Optimization

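ADOMD.NET talks to an analytical engine (such as Analysis Services) that is built for this kind of workload, which generally means better performance for complex calculations and aggregations than running the same logic on a transactional database. The engine achieves this through: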

- Specialized data structures for multidimensional analysis

- Efficient handling of hierarchical data

- Built-in support for complex calculations

- Advanced Analytics Capabilities

The tool supports sophisticated analysis patterns like:

string mdxQuery = @"
    WITH MEMBER [Measures].[GrowthVsPreviousYear] AS
        ([Measures].[Sales Amount] -
            ([Measures].[Sales Amount], [Date].[Calendar Year].CURRENTMEMBER.PREVMEMBER)
        ) / ([Measures].[Sales Amount], [Date].[Calendar Year].CURRENTMEMBER.PREVMEMBER)
    SELECT
        {[Measures].[Sales Amount], [Measures].[GrowthVsPreviousYear]}
        ON COLUMNS...";
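The MDX strings above only become useful once they are sent to a server. The following is a minimal sketch of how the first query could be executed with the ADOMD.NET client library. The connection string, catalog name, and cube are assumptions for illustration, and the code needs a reachable Analysis Services instance plus the ADOMD.NET client package to run.

```csharp
using System;
using Microsoft.AnalysisServices.AdomdClient;

public static class MdxDemo
{
    public static void Main()
    {
        // Assumed server and catalog; replace with your own Analysis Services instance.
        const string connectionString = "Data Source=localhost;Catalog=SalesDb";
        const string mdxQuery = @"
            SELECT {[Measures].[Sales Amount]} ON COLUMNS,
                   {[Date].[Calendar Year].MEMBERS} ON ROWS
            FROM [Sales Cube]
            WHERE [Product].[Category].[Electronics]";

        using (var connection = new AdomdConnection(connectionString))
        {
            connection.Open();
            using (var command = new AdomdCommand(mdxQuery, connection))
            {
                // A CellSet exposes the result as axes (columns, rows) plus cells.
                CellSet cellSet = command.ExecuteCellSet();
                var rows = cellSet.Axes[1].Positions;

                for (int row = 0; row < rows.Count; row++)
                {
                    string year = rows[row].Members[0].Caption;
                    string amount = cellSet.Cells[0, row].FormattedValue; // [column, row]
                    Console.WriteLine($"{year}: {amount}");
                }
            }
        }
    }
}
```

The pattern deliberately mirrors classic ADO.NET (connection, command, result), which is part of what makes the library approachable for developers coming from SQL.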

Lessons Learned

While we couldn’t implement ADOMD.NET due to our use of Pervasive Database instead of SQL Server, the investigation taught me valuable lessons about report optimization:

- The importance of choosing the right tools for analytical workloads

- The limitations of running complex analytics on transactional databases

- The value of specialized query languages for different types of data analysis

Modern Applications

Today, ADOMD.NET continues to be relevant for organizations using:

- SQL Server Analysis Services (SSAS)

- Azure Analysis Services

- Power BI Premium datasets

If I were facing the same challenge today with SQL Server, ADOMD.NET would be my go-to solution for:

- Complex sales analysis

- Customer behavior tracking

- Performance-intensive analytical reports

Conclusion

While our specific situation with Pervasive Database prevented us from using ADOMD.NET, it remains a powerful tool for organizations using Microsoft’s analytics stack. The experience taught me that sometimes the solution isn’t about optimizing existing queries, but about choosing the right specialized tools for analytical workloads.

Remember: Just because you can run analytics on your transactional database doesn’t mean you should. Tools like ADOMD.NET exist for a reason, and understanding when to use them can save countless hours of optimization work and provide better results for your users.

by Joche Ojeda | Jan 17, 2025 | DevExpress, dotnet

My mom used to say that fashion is cyclical – whatever you do will eventually come back around. I’ve come to realize the same principle applies to technology. Many technologies have come and gone, only to resurface again in new forms.

Take Command Line Interface (CLI) commands, for example. For years, the industry pushed to move away from CLI towards graphical interfaces, promising a more user-friendly experience. Yet here we are in 2025, witnessing a remarkable return to CLI-based tools, especially in software development.

As a programmer, efficiency is key – particularly when dealing with repetitive tasks. This became evident when my business partner Javier and I decided to create our own application templates for Visual Studio. The process was challenging, mainly because Visual Studio’s template infrastructure isn’t well maintained. Documentation was sparse, and the whole process felt cryptic.

Our first major project was creating a template for Xamarin.Forms (now .NET MAUI), aiming to build a multi-target application template that could work across Android, iOS, and Windows. We relied heavily on James Montemagno’s excellent resources and videos to navigate this complex territory.

The task became significantly easier with the introduction of SDK-style projects. Compared to the older, verbose project file format, which was notoriously complex to template, the new format makes creating custom project templates much more straightforward.

In today’s development landscape, most application templates are distributed as NuGet packages, making them easier to share and implement. Interestingly, these packages are primarily designed for CLI use rather than Visual Studio’s graphical interface – a perfect example of technology coming full circle.

Following this trend, DevExpress has developed a new set of application templates that work cross-platform using the CLI. These templates leverage SkiaSharp for UI rendering, enabling true multi-IDE and multi-OS compatibility. While they’re not yet compatible with Apple Silicon, that support is likely coming in future updates.

The templates utilize CLI under the hood to generate new project structures. When you install these templates in Visual Studio Code or Visual Studio, they become available through both the CLI and the graphical interface, offering developers the best of both worlds.

Here is the official DevExpress blog post for the new application templates

https://www.devexpress.com/subscriptions/whats-new/#project-template-gallery-net8

Templates for Visual Studio

DevExpress Template Kit for Visual Studio – Visual Studio Marketplace

Templates for VS Code

DevExpress Template Kit for VS Code – Visual Studio Marketplace

If you want to see the list of the newly installed DevExpress templates, you can run the following command in the terminal:

dotnet new list dx

I’d love to hear your thoughts on this technological cycle. Which approach do you prefer for creating new projects – CLI or graphical interface? Let me know in the comments below!

by Joche Ojeda | Jan 15, 2025 | C#, dotnet, Emit, MetaProgramming, Reflection

Every programmer encounters that one technology that draws them into the darker arts of software development. For some, it’s metaprogramming; for others, it’s assembly hacking. For me, it was the mysterious world of runtime code generation through Emit in the early 2000s, during my adventures with XPO and the enigmatic Sage Accpac ERP.

The Quest Begins: A Tale of Documentation and Dark Arts

Back in the early 2000s, when the first version of XPO was released, I found myself working alongside my cousin Carlitos in our startup. Fresh from his stint as an ERP consultant in the United States, Carlitos brought with him deep knowledge of Sage Accpac, setting us on a path to provide integration services for this complex system.

Our daily bread and butter were custom reports – starting with Crystal Reports before graduating to DevExpress’s XtraReports and XtraPivotGrid. But we faced an interesting challenge: Accpac’s database was intentionally designed to resist reverse engineering, with flat tables devoid of constraints or relationships. All we had was their HTML documentation, a labyrinth of interconnected pages holding the secrets of their entity relationships.

Genesis: When Documentation Meets Dark Magic

This challenge birthed Project Genesis, my ambitious attempt to create an XPO class generator that could parse Accpac’s documentation. The first hurdle was parsing HTML – a quest that led me to CodePlex (yes, I’m dating myself here) and the discovery of HTMLAgilityPack, a remarkable tool that still serves developers today.

But the real dark magic emerged when I faced the challenge of generating classes dynamically. Buried in our library’s .NET books, I discovered the arcane art of Emit – a powerful technique for runtime assembly and class generation that would forever change my perspective on what’s possible in .NET.

Diving into the Abyss: Understanding Emit

At its core, Emit is like having a magical forge where you can craft code at runtime. Imagine being able to write code that writes more code – not just as text to be compiled later, but as actual, executable IL instructions that the CLR can run immediately.

// Requires: using System.Reflection; using System.Reflection.Emit;
AssemblyName assemblyName = new AssemblyName("DynamicAssembly");
AssemblyBuilder assemblyBuilder = AssemblyBuilder.DefineDynamicAssembly(
    assemblyName,
    AssemblyBuilderAccess.Run
);

This seemingly simple code opens a portal to one of .NET’s most powerful capabilities: dynamic assembly generation. It’s the beginning of a spell that allows you to craft types and methods from pure thought (and some carefully crafted IL instructions).
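To show where that first step leads, here is a small, self-contained sketch that continues from the dynamic assembly to a module, a type, and a static method whose body is written as IL. The type name `Adder` and the method `Add` are invented for this example and are not part of Project Genesis.

```csharp
using System;
using System.Reflection;
using System.Reflection.Emit;

public static class EmitDemo
{
    // Builds a type "Adder" at runtime with a static method: int Add(int, int).
    public static MethodInfo BuildAddMethod()
    {
        var assemblyName = new AssemblyName("DynamicAssembly");
        AssemblyBuilder assemblyBuilder = AssemblyBuilder.DefineDynamicAssembly(
            assemblyName, AssemblyBuilderAccess.Run);
        ModuleBuilder moduleBuilder = assemblyBuilder.DefineDynamicModule("MainModule");
        TypeBuilder typeBuilder = moduleBuilder.DefineType("Adder", TypeAttributes.Public);

        MethodBuilder methodBuilder = typeBuilder.DefineMethod(
            "Add",
            MethodAttributes.Public | MethodAttributes.Static,
            typeof(int),
            new[] { typeof(int), typeof(int) });

        // The method body, instruction by instruction.
        ILGenerator il = methodBuilder.GetILGenerator();
        il.Emit(OpCodes.Ldarg_0); // push first argument
        il.Emit(OpCodes.Ldarg_1); // push second argument
        il.Emit(OpCodes.Add);     // add the two values on the stack
        il.Emit(OpCodes.Ret);     // return the result

        // Baking the type makes it usable like any compiled type.
        Type adderType = typeBuilder.CreateType();
        return adderType.GetMethod("Add");
    }

    public static void Main()
    {
        MethodInfo add = BuildAddMethod();
        Console.WriteLine(add.Invoke(null, new object[] { 2, 3 })); // 5
    }
}
```

Even in this tiny method, swapping or omitting a single instruction produces invalid IL that only fails when the method is first executed, which is exactly the kind of backfire described in the next section.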

The Power and the Peril

Like all dark magic, Emit comes with its own dangers and responsibilities. When you’re generating IL directly, you’re dancing with the very fabric of .NET execution. One wrong move – one misplaced instruction – and your carefully crafted spell can backfire spectacularly.

The first rule of Emit Club is: don’t use Emit unless you absolutely have to. The second rule is: if you do use it, document everything meticulously. Your future self (and your team) will thank you.

Modern Alternatives and Evolution

Today, the .NET ecosystem offers alternatives like Source Generators, which produce code at compile time rather than at runtime and cover many of the same scenarios with less risk. But understanding Emit remains valuable – it’s like knowing the fundamental laws of magic while using higher-level spells for daily work.

In my case, Project Genesis evolved beyond its original scope, teaching me crucial lessons about runtime code generation, performance optimization, and the delicate balance between power and maintainability.

Conclusion: The Magic Lives On

Twenty years later, Emit remains one of .NET’s most powerful and mysterious features. While modern development practices might steer us toward safer alternatives, understanding these fundamental building blocks of runtime code generation gives us deeper insight into the framework’s capabilities.

For those brave enough to venture into this realm, remember: with great power comes great responsibility – and the need for comprehensive unit tests. The dark magic of Emit might be seductive, but like all powerful tools, it demands respect and careful handling.

by Joche Ojeda | Jan 14, 2025 | C#, dotnet, MetaProgramming, Reflection

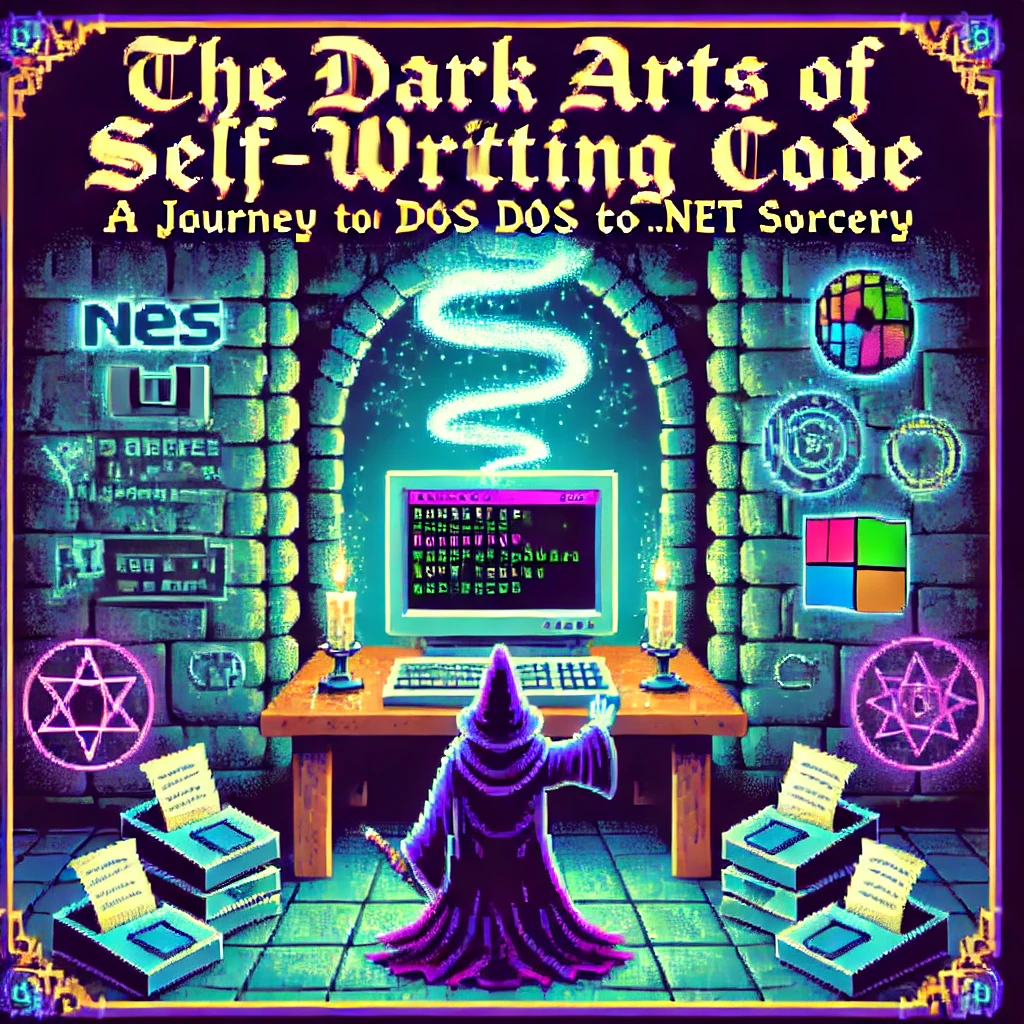

The Beginning of a Digital Sorcerer

Every master of the dark arts has an origin story, and mine begins in the ancient realm of MS-DOS 6.1. What started as simple experimentation with BAT files would eventually lead me down a path to discovering one of programming’s most powerful arts: metaprogramming.

I still remember the day my older brother Oscar introduced me to the mystical DIR command. He was three years ahead of me in school, already initiated into the computer classes that would begin in “tercer ciclo” (7th through 9th grade) in El Salvador. This simple command, capable of revealing the contents of directories, was my first spell in what would become a lifelong pursuit of programming magic.

My childhood hobbies – playing video games, guitar, and piano (a family tradition, given my father’s musical lineage) – faded into the background as I discovered the enchanting world of DOS commands. The discovery that files ending in .exe were executable spells and .com files were commands that accepted parameters opened up a new realm of possibilities.

Armed with EDIT.COM, a primitive but powerful text editor, I began experimenting with every file I could find. The real breakthrough came when I discovered AUTOEXEC.BAT, a mystical scroll that controlled the DOS startup ritual. This was my first encounter with automated script execution, though I didn’t know it at the time.

The Path of Many Languages

My journey through the programming arts led me through many schools of magic: Turbo Pascal, C++, FoxPro (more of an application framework than a pure language), Delphi, VB6, VBA, VB.NET, and finally, my true calling: C#.

During my university years, I co-founded my first company with my cousin “Carlitos,” supported by my uncle Carlos Melgar, who had been like a father to me. While we had some coding experience, our ambition to create our own ERP system led us to expand our circle. This is where I met Abel, one of two programmers we recruited who were dating my cousins at the time. Abel, coming from a Delphi background, introduced me to a concept that would change my understanding of programming forever: reflection.

Understanding the Dark Arts of Metaprogramming

What Abel revealed to me that day was just the beginning of my journey into metaprogramming, a form of magic that allows code to examine and modify itself at runtime. In the .NET realm, this sorcery primarily manifests through reflection, a power that would have seemed impossible in my DOS days.

Let me share with you the secrets I’ve learned along this path:

The Power of Reflection: Your First Spell

// A basic spell of introspection
// Requires: using System; using System.Reflection;
Type stringType = typeof(string);
MethodInfo[] methods = stringType.GetMethods();

foreach (var method in methods)
{
    Console.WriteLine($"Discovered spell: {method.Name}");
}

This simple incantation allows your code to examine itself, revealing the methods hidden within any type. But this is just the beginning.

Conjuring Objects from the Void

As your powers grow, you’ll learn to create objects dynamically:

// Requires: using System; using System.Collections.Generic; using System.Reflection;
public class ObjectConjurer
{
    public T SummonAndEnchant<T>(Dictionary<string, object> properties) where T : new()
    {
        T instance = new T();
        Type type = typeof(T);

        foreach (var property in properties)
        {
            // Look up a public property by name and set it only if it is writable
            PropertyInfo prop = type.GetProperty(property.Key);
            if (prop != null && prop.CanWrite)
            {
                prop.SetValue(instance, property.Value);
            }
        }

        return instance;
    }
}

Advanced Rituals: Expression Trees

Expression<Func<int, bool>> ageCheck = age => age >= 18;

var parameter = Expression.Parameter(typeof(int), "age");

var constant = Expression.Constant(18, typeof(int));

var comparison = Expression.GreaterThanOrEqual(parameter, constant);

var lambda = Expression.Lambda<Func<int, bool>>(comparison, parameter);
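The snippet above builds the tree but stops before using it. As a minimal sketch, the same age check can be built by hand, compiled into a delegate, and invoked. The helper name `BuildAgeCheck` is made up for this illustration.

```csharp
using System;
using System.Linq.Expressions;

public static class ExpressionDemo
{
    // Builds "age => age >= minimumAge" node by node and compiles it to a delegate.
    public static Func<int, bool> BuildAgeCheck(int minimumAge)
    {
        ParameterExpression age = Expression.Parameter(typeof(int), "age");
        ConstantExpression minimum = Expression.Constant(minimumAge, typeof(int));
        BinaryExpression comparison = Expression.GreaterThanOrEqual(age, minimum);

        Expression<Func<int, bool>> lambda =
            Expression.Lambda<Func<int, bool>>(comparison, age);

        // Compile turns the tree into executable code; do it once and reuse the delegate.
        return lambda.Compile();
    }

    public static void Main()
    {
        Func<int, bool> isAdult = BuildAgeCheck(18);
        Console.WriteLine(isAdult(21)); // True
        Console.WriteLine(isAdult(12)); // False
    }
}
```

Because the threshold is an ordinary parameter, the same builder can produce different checks at runtime, which is the main reason to construct a tree manually instead of writing the lambda directly.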

The Price of Power: Security and Performance

Like any powerful magic, these arts come with risks and costs. Through my journey, I learned the importance of protective wards:

Guarding Against Dark Forces

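// A protective ward for your reflective operations.
// Note: Code Access Security attributes such as SecurityPermission are only
// enforced on .NET Framework. .NET Core and .NET 5+ do not support CAS,
// so do not rely on this attribute there. A private field also stays
// readable through reflection by fully trusted code.
[SecurityPermission(SecurityAction.Demand, ControlEvidence = true)]
public class SecretKeeper
{
    private readonly string _arcaneSecret = "xyz";

    public string RevealSecret(string authToken)
    {
        // ValidateToken and ForbiddenMagicException are not shown here;
        // they stand for your own token check and exception type.
        if (ValidateToken(authToken))
            return _arcaneSecret;

        throw new ForbiddenMagicException("Unauthorized attempt to access secrets");
    }
}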

The Cost of Power

| Ritual Type | Energy Cost (ms) | Mana Usage |
|---|---|---|
| Direct Cast | 1 | Baseline |
| Reflection | 10-20 | 2x-3x |
| Cached Cast | 2-3 | 1.5x |
| Compiled | 1.2-1.5 | 1.2x |

To mitigate these costs, I learned to cache my spells:

// Requires: using System; using System.Collections.Concurrent; using System.Reflection;
public class SpellCache
{
    private static readonly ConcurrentDictionary<string, MethodInfo> SpellBook
        = new ConcurrentDictionary<string, MethodInfo>();

    public static MethodInfo GetSpell(Type type, string spellName)
    {
        // The reflection lookup runs only the first time a key is requested
        string key = $"{type.FullName}.{spellName}";
        return SpellBook.GetOrAdd(key, _ => type.GetMethod(spellName));
    }
}
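The "Compiled" row of the table goes one step further than caching the `MethodInfo`: the method is bound to a delegate once, so later calls avoid `MethodInfo.Invoke` entirely. Here is a minimal sketch of that idea, limited for simplicity to parameterless `string` methods that return a `string`. The class name `CompiledSpellCache` is invented for this example.

```csharp
using System;
using System.Collections.Concurrent;
using System.Reflection;

public static class CompiledSpellCache
{
    private static readonly ConcurrentDictionary<string, Func<string, string>> Cache =
        new ConcurrentDictionary<string, Func<string, string>>();

    // Finds a parameterless string method (for example "ToUpperInvariant") once and
    // binds it to an open-instance delegate: the delegate's argument is the "this" value.
    public static Func<string, string> GetStringSpell(string methodName)
    {
        return Cache.GetOrAdd(methodName, name =>
        {
            MethodInfo method = typeof(string).GetMethod(name, Type.EmptyTypes);
            if (method == null)
                throw new MissingMethodException(nameof(String), name);

            // Throws ArgumentException if the method does not return a string.
            return (Func<string, string>)Delegate.CreateDelegate(
                typeof(Func<string, string>), method);
        });
    }

    public static void Main()
    {
        Func<string, string> shout = GetStringSpell("ToUpperInvariant");
        Console.WriteLine(shout("expecto")); // EXPECTO
    }
}
```

Calling the cached delegate costs roughly the same as a normal delegate call, whereas `MethodInfo.Invoke` has to box arguments and validate them on every call.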

Practical Applications in the Modern Age

Today, these dark arts power many of our most powerful frameworks:

- Entity Framework uses reflection for its magical object-relational mapping

- Dependency Injection containers use it to automatically wire up our applications

- Serialization libraries use it to transform objects into different forms

- Unit testing frameworks use it to create test doubles and verify behavior

Wisdom for the Aspiring Sorcerer

From my journey from DOS batch files to the heights of .NET metaprogramming, I’ve gathered these pieces of wisdom:

- Cache your incantations whenever possible

- Guard your secrets with proper wards

- Measure the cost of your rituals

- Use direct casting when available

- Document your dark arts thoroughly

Conclusion

Looking back at my journey from those first DOS commands to mastering the dark arts of metaprogramming, I’m reminded that every programmer’s path is unique. That young boy who first typed DIR in MS-DOS could never have imagined where that path would lead. Today, as I work with advanced concepts like reflection and metaprogramming in .NET, I’m reminded that our field is one of continuous learning and evolution.

The dark arts of metaprogramming may be powerful, but like any tool, their true value lies in knowing when and how to use them effectively. Remember, while the ability to make code write itself might seem like sorcery, the real magic lies in understanding the fundamentals and growing from them. Whether you’re starting with basic commands like I did or diving straight into advanced concepts, every step of the journey contributes to your growth as a developer.

And who knows? Maybe one day you’ll find yourself teaching these dark arts to the next generation of digital sorcerers.

by Joche Ojeda | Jan 13, 2025 | Uncategorized

As the new year (2025) starts, I want to share some insights from my role at Xari. While Javier and I founded the company together (he’s the Chief in Command, and I’ve dubbed myself the Minister of Dark Magic), our rapid growth has made these playful titles more meaningful than we expected.

Among my self-imposed responsibilities are:

- Providing ancient knowledge to the team (I’ve been coding since MS-DOS 6.1 – you do the math!)

- Testing emerging technologies

- Deciphering how and why our systems work

- Achieving the “impossible” (even if impractical, we love proving it can be done)

Our Technical Landscape

As a .NET shop, we develop everything from LOB applications to AI-powered object detection systems and mainframe database connectors. Our preference for C# isn’t just about the language – it’s about the power of the .NET ecosystem itself.

.NET’s architecture, with its intermediate language and JIT compilation, opens up fascinating possibilities for code manipulation. This brings us to one of my favorite features: Reflection, or more broadly, metaprogramming.

Enter Harmony: The Art of Runtime Magic

Harmony is a powerful library that transforms how we approach runtime method patching in .NET applications. Think of it as a sophisticated Swiss Army knife for metaprogramming. But why would you need it?

Real-World Applications

1. Performance Monitoring

[HarmonyPatch(typeof(CriticalService), "ProcessData")]
class PerformancePatch
{
    static void Prefix(out Stopwatch __state)
    {
        __state = Stopwatch.StartNew();
    }

    static void Postfix(Stopwatch __state)
    {
        Console.WriteLine($"Processing took {__state.ElapsedMilliseconds}ms");
    }
}

2. Feature Toggling in Legacy Systems

[HarmonyPatch(typeof(LegacySystem), "SaveToDatabase")]
class ModernizationPatch
{
    static bool Prefix(object data)
    {
        if (FeatureFlags.UseNewStorage)
        {
            ModernDbContext.Save(data);
            return false; // Skip old implementation
        }
        return true;
    }
}

The Three Pillars of Harmony

Harmony offers three powerful ways to modify code:

1. Prefix Patches

- Execute before the original method

- Perfect for validation

- Can prevent original method execution

- Modify input parameters

2. Postfix Patches

- Run after the original method

- Ideal for logging

- Can modify return values

- Access to execution state

3. Transpilers

- Modify the IL code directly

- Most powerful but complex

- Direct instruction manipulation

- Used for advanced scenarios
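The patch classes shown earlier do nothing until they are applied. Below is a minimal end-to-end sketch of the first two pillars, assuming the Lib.Harmony NuGet package (namespace `HarmonyLib`) is referenced. `Calculator`, `CalculatorPatch`, and the Harmony id string are hypothetical names used only for this illustration.

```csharp
using System;
using System.Runtime.CompilerServices;
using HarmonyLib;

public class Calculator
{
    // NoInlining keeps the JIT from inlining this tiny method, which would bypass the patch.
    [MethodImpl(MethodImplOptions.NoInlining)]
    public int Double(int value) => value * 2;
}

[HarmonyPatch(typeof(Calculator), nameof(Calculator.Double))]
public static class CalculatorPatch
{
    // Prefix: runs first and may rewrite the input. The parameter name must match the original.
    public static void Prefix(ref int value)
    {
        if (value < 0) value = 0;
    }

    // Postfix: runs afterwards and may rewrite the return value through __result.
    public static void Postfix(ref int __result)
    {
        __result += 1;
    }
}

public static class HarmonyDemo
{
    public static void ApplyPatches()
    {
        // The id only has to be unique among Harmony users in the process.
        var harmony = new Harmony("com.example.calculator-patch");
        harmony.PatchAll(typeof(HarmonyDemo).Assembly);
    }

    public static void Main()
    {
        ApplyPatches();
        Console.WriteLine(new Calculator().Double(5));
    }
}
```

`PatchAll` scans the given assembly for classes carrying `[HarmonyPatch]`, so adding another patch class is enough to have it picked up. Transpilers are left out here because they require working with the IL instruction stream directly.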

Practical Example: Method Timing

Here’s a real-world example we use at Xari for performance monitoring:

[HarmonyPatch(typeof(Controller), "ProcessRequest")]
class MonitoringPatch
{
    static void Prefix(out Stopwatch __state)
    {
        __state = Stopwatch.StartNew();
    }

    static void Postfix(MethodBase __originalMethod, Stopwatch __state)
    {
        __state.Stop();
        Logger.Log($"{__originalMethod.Name} execution: {__state.ElapsedMilliseconds}ms");
    }
}

When to Use Harmony

Harmony shines when you need to:

- Modify third-party code without source access

- Implement system-wide logging or monitoring

- Create modding frameworks

- Add features to sealed classes

- Test legacy systems

The Dark Side of Power

While Harmony is powerful, use it wisely:

- Avoid in production-critical systems where stability is paramount

- Consider simpler alternatives first

- Be cautious with high-performance scenarios

- Document your patches thoroughly

Conclusion

In our work at Xari, Harmony has proven invaluable for solving seemingly impossible problems. While it might seem like “dark magic,” it’s really about understanding and leveraging the powerful features of .NET’s architecture.

Remember: with great power comes great responsibility. Use Harmony when it makes sense, but always consider simpler alternatives first. Happy coding!

by Joche Ojeda | Jan 12, 2025 | ADO.NET, C#, CPU, dotnet, ORM, XAF, XPO

Introduction

In the .NET ecosystem, “AnyCPU” is often considered a silver bullet for cross-platform deployment. However, this assumption can lead to significant problems when your application depends on native assemblies. In this post, I want to share a personal story that highlights how I discovered these limitations and how native dependencies affect the true portability of AnyCPU applications, especially for database access through ADO.NET and popular ORMs.

My Journey to Understanding AnyCPU’s Limitations

Every year, around Thanksgiving or Christmas, I visit my friend, brother, and business partner Javier. Two years ago, during one of these visits, I made a decision that would lead me to a pivotal realization about AnyCPU architecture.

At the time, I was tired of traveling with my bulky MSI GE72 Apache Pro-24 gaming laptop. According to MSI’s official specifications, it weighed 5.95 pounds—but that number didn’t include the hefty charger, which brought the total to around 12 pounds. Later, I upgraded to an MSI GF63 Thin, which was lighter at 4.10 pounds—but with the charger, it was still around 7.5 pounds. Lugging these laptops through airports felt like a workout.

Determined to travel lighter, I purchased a MacBook Air with the M2 chip. At just 2.7 pounds, including the charger, the MacBook Air felt like a breath of fresh air. The Apple Silicon chip was incredibly fast, and I immediately fell in love with the machine.

Years ago I had used a MacBook Pro running Windows 7 through Boot Camp, so I thought I could recreate that experience by running a Windows virtual machine on my MacBook Air to check projects and do some light development while traveling.

The Virtualization Experiment

As someone who loves virtualization, I eagerly set up a Windows virtual machine on my MacBook Air. I grabbed my trusty Windows x64 ISO, set up the virtual machine, and attempted to boot it—but it failed. I quickly realized the issue was related to CPU architecture. My x64 ISO wasn’t compatible with the ARM-based M2 chip.

Undeterred, I downloaded a Windows 11 ISO for ARM architecture and created the VM. Success! Windows was up and running, and I installed Visual Studio along with my essential development tools, including DevExpress XPO (my favorite ORM).

The Demo Disaster

The real test came during a trip to Dubai, where I was scheduled to give a live demo showcasing how quickly you can develop Line-of-Business (LOB) apps with XAF. Everything started smoothly until I tried to connect my XAF app to the database. Despite my best efforts, the connection failed.

In the middle of the demo, I switched to an in-memory data provider to salvage the presentation. After the demo, I dug into the issue and realized the root cause was related to the CPU architecture. The native database drivers I was using weren’t compatible with the ARM architecture.

A Familiar Problem

This situation reminded me of the transition from x86 to x64 years ago. Back then, I encountered similar issues where native drivers wouldn’t load unless they matched the process architecture.

The Native Dependency Challenge

Platform-Specific Loading Requirements

Native DLLs must exactly match the CPU architecture of your application:

- If your app runs as x86, it can only load x86 native DLLs.

- If running as x64, it requires x64 native DLLs.

- ARM requires ARM-specific binaries.

- ARM64 requires ARM64-specific binaries.

There is no flexibility: attempting to load a DLL compiled for a different architecture fails immediately, typically with a BadImageFormatException.

How Native Libraries are Loaded

When your application loads a native DLL, the operating system follows a specific search pattern (simplified here):

- The application’s directory

- System directories (System32/SysWOW64)

- Directories listed in the PATH environment variable

Crucially, these native libraries must match the exact architecture of the running process.

// This seemingly simple code

[DllImport("native.dll")]

static extern void NativeMethod();

// Actually requires:

// - native.dll compiled for x86 when running as 32-bit

// - native.dll compiled for x64 when running as 64-bit

// - native.dll compiled for ARM64 when running on ARM64
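When a native dependency fails to load, the first thing worth checking is which architecture the process is actually running as. The sketch below prints that information and probes whether a library can be loaded, using `RuntimeInformation` and `NativeLibrary` (the latter is available on .NET Core 3.0 and later). The file name `native.dll` is the placeholder from the snippet above, and `ArchitectureProbe` is a made-up helper name.

```csharp
using System;
using System.Runtime.InteropServices;

public static class ArchitectureProbe
{
    // Summarizes what the AnyCPU process actually resolved to at runtime.
    public static string Describe() =>
        $"Process: {RuntimeInformation.ProcessArchitecture}, " +
        $"OS: {RuntimeInformation.OSArchitecture}, " +
        $"64-bit process: {Environment.Is64BitProcess}";

    // Returns true if the native library can be loaded into this process.
    // A missing file or a wrong-architecture binary both end up as false here.
    public static bool CanLoad(string libraryName)
    {
        if (NativeLibrary.TryLoad(libraryName, out IntPtr handle))
        {
            NativeLibrary.Free(handle);
            return true;
        }
        return false;
    }

    public static void Main()
    {
        Console.WriteLine(Describe());
        Console.WriteLine($"native.dll loadable: {CanLoad("native.dll")}");
    }
}
```

Running a check like this at startup, and logging the result, turns an obscure failure in the middle of a demo into a clear message about a mismatched or missing binary.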

The SQL Server Example

Let’s look at SQL Server connectivity, a common scenario where the AnyCPU illusion breaks down:

// Traditional ADO.NET connection
using (var connection = new SqlConnection(connectionString))
{
    // On Windows, SqlClient reaches the server through a native
    // SNI (SQL Server Network Interface) library,
    // which must match the process architecture
    await connection.OpenAsync();
}

Even though your application is compiled as AnyCPU, the native SNI component that SqlClient loads must match the process architecture. This becomes particularly problematic on newer architectures like ARM64, where native drivers may not be available.

Impact on ORMs

Entity Framework Core

Entity Framework Core, despite its modern design, still relies on database providers that may have native dependencies:

public class MyDbContext : DbContext
{
    protected override void OnConfiguring(DbContextOptionsBuilder optionsBuilder)
    {
        // Depending on platform and provider version, this configuration pulls in:
        // 1. Microsoft.Data.SqlClient
        // 2. Its native SNI (network interface) component
        optionsBuilder.UseSqlServer(connectionString);
    }
}

DevExpress XPO

DevExpress XPO faces similar challenges:

// XPO configuration

string connectionString = MSSqlConnectionProvider.GetConnectionString("server", "database");

XpoDefault.DataLayer = XpoDefault.GetDataLayer(connectionString, AutoCreateOption.DatabaseAndSchema);

// The MSSqlConnectionProvider relies on the same SqlClient stack and its native components

Solutions and Best Practices

1. Architecture-Specific Deployment

Instead of relying on AnyCPU, consider creating architecture-specific builds:

<PropertyGroup>

<Platforms>x86;x64;arm64</Platforms>

<RuntimeIdentifiers>win-x86;win-x64;win-arm64</RuntimeIdentifiers>

</PropertyGroup>

2. Runtime Provider Selection

Implement smart provider selection based on the current architecture:

public static class DatabaseProviderFactory
{
    public static IDbConnection GetProvider()
    {
        return RuntimeInformation.ProcessArchitecture switch
        {
            Architecture.X86 => new SqlConnection(), // x86 native provider
            Architecture.X64 => new SqlConnection(), // x64 native provider
            Architecture.Arm64 => new Microsoft.Data.SqlClient.SqlConnection(), // ARM64 support
            _ => throw new PlatformNotSupportedException()
        };
    }
}

3. Managed Fallbacks

Implement fallback strategies when native providers aren’t available:

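public class DatabaseConnection
{
    private readonly string _connectionString;

    public DatabaseConnection(string connectionString)
    {
        _connectionString = connectionString;
    }

    public async Task<IDbConnection> CreateConnectionAsync()
    {
        var connection = new System.Data.SqlClient.SqlConnection(_connectionString);
        try
        {
            await connection.OpenAsync();
            return connection;
        }
        catch (DllNotFoundException)
        {
            // Native provider is missing: release the failed connection and fall back
            connection.Dispose();
            var managedConnection = new Microsoft.Data.SqlClient.SqlConnection(_connectionString);
            await managedConnection.OpenAsync();
            return managedConnection;
        }
    }
}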

4. Deployment Considerations

- Include all necessary native dependencies for each target architecture.

- Use architecture-specific directories in your deployment.

- Consider self-contained deployment to include the correct runtime.
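
The "architecture-specific directories" point above could be sketched roughly as follows. The `runtimes/<rid>/native` layout mirrors the convention NuGet packages use, and `RidFor` and `NativeDirectory` are hypothetical helper names; where your native files actually live is an assumption you will need to adapt.

```csharp
using System;
using System.IO;
using System.Runtime.InteropServices;

// Hypothetical helper: maps a process architecture to one of the Windows
// runtime identifiers used in the project file earlier.
static string RidFor(Architecture arch) => arch switch
{
    Architecture.X86 => "win-x86",
    Architecture.X64 => "win-x64",
    Architecture.Arm64 => "win-arm64",
    _ => throw new PlatformNotSupportedException($"No native binaries shipped for {arch}.")
};

// Hypothetical helper: builds the folder that should hold the native
// dependencies for the given architecture (assumed layout, see above).
static string NativeDirectory(string baseDir, Architecture arch) =>
    Path.Combine(baseDir, "runtimes", RidFor(arch), "native");

// Startup check: report whether the folder for the current process exists.
string dir = NativeDirectory(AppContext.BaseDirectory, RuntimeInformation.ProcessArchitecture);
Console.WriteLine(Directory.Exists(dir)
    ? $"Native dependencies found in {dir}"
    : $"Missing native directory: {dir}");
```

Running a check like this at startup turns a confusing `DllNotFoundException` deep inside a data provider into an explicit message about which folder is missing.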

Real-World Implications

This experience taught me that while AnyCPU provides excellent flexibility for managed code, it has limitations when dealing with native dependencies. These limitations become more apparent in scenarios like cloud deployments, ARM64 devices, and live demos.

Conclusion

The transition to ARM architecture is accelerating, and understanding the nuances of AnyCPU and native dependencies is more important than ever. By planning for architecture-specific deployments and implementing fallback strategies, you can build more resilient applications that can thrive in a multi-architecture world.

by Joche Ojeda | Jan 9, 2025 | dotnet

While researching useful features in .NET 9 that could benefit XAF/XPO developers, I discovered something particularly interesting: Version 7 GUIDs (RFC 9562 specification). These new GUIDs offer a crucial feature – they’re sortable.

This discovery brought me back to an issue I encountered two years ago while working on the SyncFramework. We faced a peculiar problem where Deltas were correctly generated but processed in the wrong order in production environments. The occurrences seemed random, and no clear pattern emerged.

Initially, I thought using Delta primary keys (GUIDs) to sort the Deltas would ensure they were processed in their generation order. However, this assumption proved incorrect. Through testing, I discovered that GUID generation couldn’t be trusted to be sequential. This issue affected multiple components of the SyncFramework. Whether generating GUIDs in C# or at the database level, there was no guarantee of sequential ordering, and different database engines could sort GUIDs differently. To address this, I implemented a sequence service as a solution.

Enter .NET 9 with its Version 7 GUIDs (conforming to the RFC 9562 specification). These new GUIDs are time-ordered (they begin with a 48-bit millisecond timestamp), making them far more reliable for sorting operations.

To demonstrate this improvement, I created a test solution for XAF with a custom base object. The key implementation occurs in the OnSaving method:

protected override void OnSaving()
{
    base.OnSaving();
    if (!(Session is NestedUnitOfWork) && Session.IsNewObject(this) && oid.Equals(Guid.Empty))
    {
        oid = Guid.CreateVersion7();
    }
}

Notice the use of CreateVersion7() instead of the traditional NewGuid(). For comparison, I also created another domain object using the traditional GUID generation:

protected override void OnSaving()
{
    base.OnSaving();
    if (!(Session is NestedUnitOfWork) && Session.IsNewObject(this) && oid.Equals(Guid.Empty))
    {
        oid = Guid.NewGuid();
    }
}

When creating multiple instances of the traditional GUID domain object, you’ll notice that the greater the time interval between instance creation, the less likely the GUIDs will maintain sequential ordering.
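
A quick way to see the difference outside XAF is a small console sketch like the one below. It assumes .NET 9 or later and uses the `Guid.CreateVersion7(DateTimeOffset)` overload with explicit timestamps one millisecond apart, so the result does not depend on how fast the loop runs. The helper name `IsInCreationOrder` is hypothetical. Keep in mind that this checks .NET's own `Guid` comparison; a database engine may sort the same values differently.

```csharp
using System;
using System.Linq;

// Hypothetical helper: true when sorting the GUIDs leaves them in their
// original (creation) order.
static bool IsInCreationOrder(Guid[] ids) => ids.SequenceEqual(ids.OrderBy(id => id));

DateTimeOffset start = DateTimeOffset.UtcNow;

// Version 7: the leading 48 bits hold a Unix timestamp in milliseconds.
Guid[] v7 = Enumerable.Range(0, 10)
    .Select(i => Guid.CreateVersion7(start.AddMilliseconds(i)))
    .ToArray();

// Version 4 (Guid.NewGuid): random bits with no relation to creation time.
Guid[] v4 = Enumerable.Range(0, 10).Select(_ => Guid.NewGuid()).ToArray();

Console.WriteLine($"v7 in creation order: {IsInCreationOrder(v7)}");
Console.WriteLine($"v4 in creation order: {IsInCreationOrder(v4)}");
```

Version 7 GUIDs created within the same millisecond share a timestamp, so their relative order then depends on the random part; the sketch spaces the timestamps out to avoid that case.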

GUID Version 7

GUID Old Version

This new feature in .NET 9 could significantly simplify scenarios where sequential ordering is crucial, eliminating the need for additional sequence services in many cases. Here is the repo on GitHub. Happy coding until next time!

Related article

On my GUID, common problems using GUID identifiers | Joche Ojeda

by Joche Ojeda | Jan 2, 2025 | XtraReports

Introduction

If you’re familiar with Windows Forms development, transitioning to XtraReports will feel remarkably natural. This guide explores how XtraReports leverages familiar Windows Forms concepts while extending them for robust reporting capabilities.

Quick Tip: Think of XtraReports as Windows Forms optimized for paper output instead of screen output!

A Personal Journey ✨

Microsoft released .NET Framework in late 2002. At the time, I was a VB6 developer, relying on Crystal Reports 7 for reporting. By 2003, my team was debating whether to transition to this new thing called .NET. We were concerned about VB6’s longevity—thinking it had just a couple more years left. How wrong we were! Even today, VB6 applications are still running in some places (it’s January 2, 2025, as I write this).

Back in the VB6 era, we used the Crystal Reports COM object to integrate reports. When we finally moved to .NET Framework, we performed some “black magic” to continue using our existing 700 reports across nine countries. The decision to fully embrace .NET was repeatedly delayed due to the sheer volume of reports we had to manage. Our ultimate goal was to unify our reporting and parameter forms within a single development environment.

This led us to explore other technologies. While considering Delphi, we discovered DevExpress. My boss procured our first DevExpress .NET license for Windows Forms, marking the start of my adventure with DevExpress and XtraReports. Initially, transitioning from the standalone Crystal Report Designer to the IDE-based XtraReports Designer was challenging. To better understand how XtraReports worked, I decided to write reports programmatically instead of using the visual designer.

Architectural Similarities

XtraReports mirrors many fundamental Windows Forms concepts:

| Source | Destination |
| --- | --- |
| XtraReport Class | Report Designer Surface |
| XtraReport Class | Control Container |
| XtraReport Class | Event System |
| XtraReport Class | Properties Window |
| Control Container | Labels & Text |
| Control Container | Tables & Grids |
| Control Container | Images & Charts |
| Report Designer Surface | Control Toolbox |
| Report Designer Surface | Design Surface |
| Report Designer Surface | Preview Window |

Just as a Windows Forms application starts with a Form class, an XtraReports report starts with the XtraReport base class. Both serve as containers that can:

- Host other controls

- Manage layout

- Handle events

- Support data binding

Visual Designer Experience

The design experience remains consistent with Windows Forms:

| Windows Forms | XtraReports |
| --- | --- |
| Form Designer | Report Designer |
| Toolbox | Report Controls |
| Properties Window | Properties Grid |
| Component Tray | Component Tool |

Control Ecosystem

XtraReports provides analogous controls to Windows Forms:

// Windows Forms
public partial class CustomerForm : Form
{
    private Label customerNameLabel;
    private DataGridView orderDetailsGrid;
}

// XtraReports
public partial class CustomerReport : XtraReport
{
    private XRLabel customerNameLabel;
    private XRTable orderDetailsTable;
}

Common control mappings:

- Label ➡️ XRLabel

- Panel ➡️ XRPanel

- PictureBox ➡️ XRPictureBox

- DataGridView ➡️ XRTable

- GroupBox ➡️ Band

- UserControl ➡️ Subreport

Data Binding Patterns

The data binding syntax maintains familiarity:

// Windows Forms data binding
customerNameLabel.DataBindings.Add("Text", customerDataSet, "Customers.Name");

// XtraReports data binding
customerNameLabel.ExpressionBindings.Add(
    new ExpressionBinding("Text", "[Name]"));

Code Architecture

The code-behind model remains consistent:

public partial class CustomerReport : DevExpress.XtraReports.UI.XtraReport
{
    public CustomerReport()
    {
        InitializeComponent(); // Familiar Windows Forms pattern
    }

    private void CustomerReport_BeforePrint(object sender, PrintEventArgs e)
    {
        // Event handling similar to Windows Forms
        // Instead of Form_Load, we have Report_BeforePrint
    }
}

Key Differences ⚡

While similarities abound, important differences exist:

- Output Focus

  - Windows Forms: Screen-based interaction

  - XtraReports: Print/export optimization

- Layout Model

  - Windows Forms: Flexible screen layouts

  - XtraReports: Page-based layouts with bands

- Control Behavior

  - Windows Forms: Interactive controls

  - XtraReports: Display-oriented controls

- Data Processing

  - Windows Forms: Real-time data interaction

  - XtraReports: Batch data processing

Some Advice

- Design Philosophy

// Think in terms of paper output
public class InvoiceReport : XtraReport
{
    protected override void OnBeforePrint(PrintEventArgs e)
    {
        // Keep the base call so BeforePrint event handlers still run
        base.OnBeforePrint(e);
        // Calculate page breaks
        // Optimize for printing
    }
}

- Layout Strategy

  - Use bands for logical grouping

  - Consider paper size constraints

  - Plan for different export formats

- Data Handling

  - Pre-process data when possible

  - Use calculated fields for complex logic

  - Consider subreports for complex layouts

by Joche Ojeda | Dec 2, 2024 | Blazor

Over time, I transitioned to using the first versions of my beloved framework, XAF. As you might know, XAF generates a polished and functional UI out of the box. Using XAF made me more of a backend developer since most of the development work wasn’t visual—especially in the early versions, where the model designer was rudimentary (it’s much better now).

Eventually, I moved on to developing .NET libraries and NuGet packages, diving deep into SOLID design principles. Fun fact: I actually learned about SOLID from DevExpress TV. Yes, there was a time before YouTube when DevExpress posted videos on technical tasks!

Nowadays, I feel confident creating and publishing my own libraries as NuGet packages. However, my “old monster” was still lurking in the shadows: UI components. I finally decided it was time to conquer it, but first, I needed to choose a platform. Here were my options:

- Windows Forms: A robust and mature platform but limited to desktop applications.

- WPF: A great option with some excellent UI frameworks that I love, but it still feels a bit “Windows Forms-ish” to me.

- Xamarin/MAUI: I’m a big fan of Xamarin.Forms and Xamarin/MAUI XAML, but they’re primarily focused on device-specific applications.

- Blazor: This was the clear winner because it allows me to create desktop applications using Electron, embed components into Windows Forms, or even integrate with MAUI.

Recently, I’ve been helping my brother with a project in Blazor. (He’s not a programmer, but I am.) This gave me an opportunity to experiment with design patterns to get the most out of my components, which started as plain HTML5 pages.

Without further ado, here are the key insights I’ve gained so far.

Building high-quality Blazor components requires attention to both the C# implementation and Razor markup patterns. This guide combines architectural best practices with practical implementation patterns to create robust, reusable components.

1. Component Architecture and Organization

Parameter Organization

Start by organizing parameters into logical groups for better maintainability:

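// Code-behind for the Razor template below, so the class is partial
public partial class CustomForm : ComponentBase
{
    // Layout Parameters
    [Parameter] public string Width { get; set; }
    [Parameter] public string Margin { get; set; }
    [Parameter] public string Padding { get; set; }

    // Validation Parameters
    [Parameter] public bool EnableValidation { get; set; }
    [Parameter] public string ValidationMessage { get; set; }

    // Content rendered inside the form (@ChildContent in the template)
    [Parameter] public RenderFragment ChildContent { get; set; }

    // Event Callbacks
    [Parameter] public EventCallback<bool> OnValidationComplete { get; set; }
    [Parameter] public EventCallback<string> OnSubmit { get; set; }

    // Handler referenced by @onsubmit in the template; the value passed to
    // OnSubmit is up to the component (the empty string is only a placeholder)
    private Task HandleSubmit() => OnSubmit.InvokeAsync(string.Empty);
}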

Corresponding Razor Template

<div class="form-container" style="width: @Width; margin: @Margin; padding: @Padding">
    <form @onsubmit="HandleSubmit">
        @if (EnableValidation)
        {
            <div class="validation-message">
                @ValidationMessage
            </div>
        }
        @ChildContent
    </form>
</div>

2. Smart Default Values and Template Composition

Component Implementation

// Code-behind for the Razor markup below, so the class is partial
public partial class DataTable<T> : ComponentBase
{
    [Parameter] public int PageSize { get; set; } = 10;
    [Parameter] public bool ShowPagination { get; set; } = true;
    [Parameter] public string EmptyMessage { get; set; } = "No data available";
    [Parameter] public IEnumerable<T> Items { get; set; } = Array.Empty<T>();
    [Parameter] public RenderFragment HeaderTemplate { get; set; }
    [Parameter] public RenderFragment<T> RowTemplate { get; set; }
    [Parameter] public RenderFragment FooterTemplate { get; set; }
}

Razor Implementation

@typeparam T

<div class="table-container">
    @if (HeaderTemplate != null)
    {
        <header class="table-header">
            @HeaderTemplate
        </header>
    }
    <div class="table-content">
        @if (!Items.Any())
        {
            <div class="empty-state">@EmptyMessage</div>
        }
        else
        {
            @foreach (var item in Items)
            {
                @RowTemplate(item)
            }
        }
    </div>
    @if (ShowPagination)
    {
        <div class="pagination">
            <!-- Pagination implementation -->
        </div>
    }
</div>
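
The pagination block in the markup above is left as a placeholder. A minimal sketch of the paging arithmetic it would need might look like this; `PageCount` and `GetPage` are hypothetical helpers (not part of the original component), and pages are assumed to be 1-based.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: number of pages needed to show itemCount items.
static int PageCount(int itemCount, int pageSize)
{
    if (pageSize <= 0) throw new ArgumentOutOfRangeException(nameof(pageSize));
    return (itemCount + pageSize - 1) / pageSize; // integer ceiling division
}

// Hypothetical helper: the items that belong on the given 1-based page.
static IEnumerable<T> GetPage<T>(IEnumerable<T> items, int page, int pageSize)
{
    if (page < 1) throw new ArgumentOutOfRangeException(nameof(page));
    if (pageSize <= 0) throw new ArgumentOutOfRangeException(nameof(pageSize));
    return items.Skip((page - 1) * pageSize).Take(pageSize);
}

var items = Enumerable.Range(1, 25).ToList();
Console.WriteLine(PageCount(items.Count, 10));                // 3
Console.WriteLine(string.Join(", ", GetPage(items, 3, 10)));  // 21, 22, 23, 24, 25
```

Inside the component, the `foreach` would then iterate over something like `GetPage(Items, currentPage, PageSize)` instead of `Items` whenever `ShowPagination` is true, with `currentPage` being a private field the pagination buttons update.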

3. Accessibility and Unique IDs

Component Implementation

// Code-behind for the Razor markup below; partial so the markup can use the private IDs
public partial class FormField : ComponentBase
{
    private readonly string fieldId = $"field-{Guid.NewGuid():N}";
    private readonly string labelId = $"label-{Guid.NewGuid():N}";
    private readonly string errorId = $"error-{Guid.NewGuid():N}";

    [Parameter] public string Label { get; set; }
    [Parameter] public string Error { get; set; }
    [Parameter] public bool Required { get; set; }
}

Razor Implementation

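<div class="form-field">
    <label id="@labelId" for="@fieldId">
        @Label
        @if (Required)
        {
            <span class="required" aria-label="required">*</span>
        }
    </label>
    @* ARIA attributes expect "true"/"false" strings, so the bool is converted explicitly;
       aria-describedby is only emitted while the error element exists *@
    <input id="@fieldId"
           aria-labelledby="@labelId"
           aria-describedby="@(string.IsNullOrEmpty(Error) ? null : errorId)"
           aria-required="@Required.ToString().ToLowerInvariant()" />
    @if (!string.IsNullOrEmpty(Error))
    {
        <div id="@errorId" class="error-message" role="alert">
            @Error
        </div>
    }
</div>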

4. Virtualization and Performance

Component Implementation

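// Code-behind for the Razor markup below, so the class is partial
public partial class VirtualizedList<T> : ComponentBase
{
    // Virtualize accepts either Items or ItemsProvider, not both,
    // and its Items parameter is an ICollection<T>
    [Parameter] public ICollection<T> Items { get; set; }
    [Parameter] public RenderFragment<T> ItemTemplate { get; set; }
    [Parameter] public int ItemHeight { get; set; } = 50;
    // Delegate type from Microsoft.AspNetCore.Components.Web.Virtualization
    [Parameter] public ItemsProviderDelegate<T> ItemsProvider { get; set; }
}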

Razor Implementation

@typeparam T

<div class="virtualized-container" style="height: 500px; overflow-y: auto;">
    <Virtualize Items="@Items"
                ItemSize="@ItemHeight"
                ItemsProvider="@ItemsProvider"
                Context="item">
        <ItemContent>
            <div class="list-item" style="height: @(ItemHeight)px">
                @ItemTemplate(item)
            </div>
        </ItemContent>
        <Placeholder>
            <div class="loading-placeholder" style="height: @(ItemHeight)px">
                <div class="loading-animation"></div>
            </div>
        </Placeholder>
    </Virtualize>
</div>

Best Practices Summary

1. Parameter Organization

- Group related parameters with clear comments

- Provide meaningful default values

- Use parameter validation where appropriate

2. Template Composition

- Use RenderFragment for customizable sections

- Provide default templates when needed

- Enable granular control over component appearance

3. Accessibility

- Generate unique IDs for form elements

- Include proper ARIA attributes

- Support keyboard navigation

4. Performance

- Implement virtualization for large datasets

- Use loading states and placeholders

- Optimize rendering with appropriate conditions

Conclusion

Building effective Blazor components requires attention to both the C# implementation and Razor markup. By following these patterns and practices, you can create components that are:

- Highly reusable

- Performant

- Accessible

- Easy to maintain

- Flexible for different use cases

Remember to adapt these practices to your specific needs while maintaining clean component design principles.