All topics

220 articles.

Articles

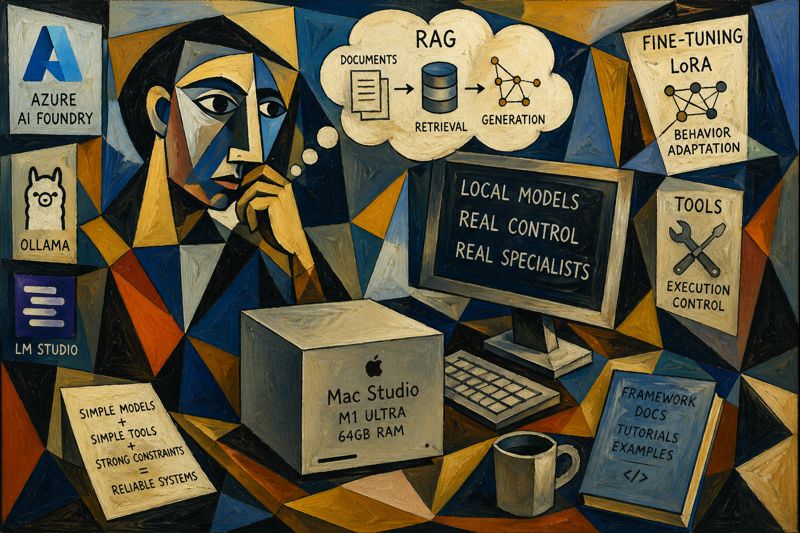

From Frontier Models to Local Specialists: My Mac Studio Experiment

After getting access to a Mac Studio in Arizona, I explored running local AI models using tools like Ollama and LM Studio. Instead of chasing frontier-level intelligence, I focused on turning small open-source models into reliable specialists using RAG, fine-tuning, and constrained tools—prioritizing control, predictability, and real-world usefulness.

How CLI Tools Can Drastically Reduce Token Consumption ⚡

After realizing that traditional tool schemas were burning tokens, I started looking for a lighter approach. CLI-style tools turned out to be a practical solution. By replacing verbose JSON schemas with simple commands, I reduced prompt size, improved tool selection, and made agents cheaper, faster, and easier to scale.

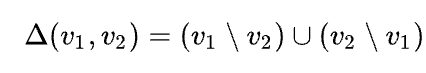

From “Hello” to Quota Exceeded: The Day My Agent Broke 💥

After building an agent with 50 tools, I expected powerful automation. Instead, users hit quota limits after saying “Hello.” The problem wasn’t usage, it was hidden context cost. Every tool was injected into every request, burning tokens instantly. This experience revealed why tool design and loading strategy matter for scalable, efficient AI agents.

The Day I Integrated GitHub Copilot SDK Inside My XAF App (Part 2)

In this second part, I integrate the GitHub Copilot SDK directly into a DevExpress XAF application, transforming a traditional business app into an AI-powered assistant. Using real tools, EF Core data, and DevExpress AI components, the system enables natural language interaction, record creation, and insights inside both Blazor and WinForms interfaces.

The Day I Integrated GitHub Copilot SDK Inside My XAF App (Part 1)

This week, while studying Russian every day, I noticed I kept relying on GitHub Copilot inside VS Code more than anything else. That curiosity turned into an experiment: what if Copilot lived inside my XAF apps? It worked… after a four-hour rabbit hole of model-related timeouts.

Closing the Loop with AI (Part 3): Moving the Human to the End of the Pipeline

Closing the loop with AI means moving the human to the end of the pipeline. Let agents write, run, test, read logs, inspect state, and iterate without you acting as QA. With Serilog, SQLite, and Playwright, the loop becomes observable and repeatable—until you only validate outcomes, not steps.

Closing the Loop (Part 2): So Far, So Good — and Yes, It’s Token Hungry

Closing the loop is working better than I expected. Copilot writes code, runs Playwright tests, reads Serilog logs, checks screenshots, fixes bugs, and retries without me babysitting it. I’m writing this on my MacBook Air while my Surface runs tests. The only downside: it’s extremely token hungry.

Closing the Loop: Letting AI Finish the Work

Getting sick on a ski trip led to an unexpected realization: the future of AI-assisted development isn’t just generating code faster, but closing the loop. By giving agents the ability to test, fail, and self-correct, we can move from endless prompting to true autonomous engineering — where humans define outcomes, not implementations.

GitHub Copilot for the Rest of Us

GitHub Copilot isn’t just a code writer—it’s a context-aware work partner inside VS Code. With terminals, files, and Remote SSH, it helps diagnose and set up Linux servers, draft runbooks, and organize creative projects like storybooks. Treat it like a workspace companion, and the use cases multiply fast.

The Mirage of a Memory Leak (or: why “it must be the framework” is usually wrong)

Memory leaks in managed runtimes are often mirages. What looks like a broken framework is usually memory retention caused by our own code: forgotten event unsubscriptions, captured lambdas, static references, and background services. Follow the GC roots, not the blame, and the illusion disappears.

As an XAF Developer, What Should I Actually Test?

With AI making code cheaper than ever, the real challenge is no longer writing features but protecting business decisions. In XAF applications, effective testing means focusing on your logic, not the framework. Test services and decisions, isolate XAF with seams, use adapters wisely, and rely on integration tests for confidence.

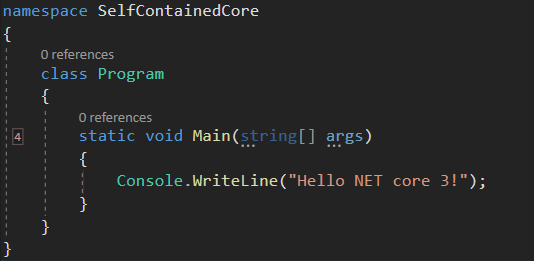

Application Installers and Assembly Resolution Using the Legacy .NET Framework

A real-world look at deploying legacy .NET Framework applications. From assembly probing and the GAC to installers that expose hidden dependencies, this article explains why copying DLLs is not deployment—and how understanding runtime resolution rules turns fragile brownfield systems into predictable, maintainable software.

Greenfield vs Brownfield: How AI Changed the Way I Build and Rescue Software

Greenfield projects let us design clean architectures from day one. Brownfield projects force us to face history, shortcuts, and technical debt. With AI, that trade-off changes. Today we can modernize fragile legacy systems safely—adding tests, improving structure, and delivering real business value without risky rewrites.

The DLL Registration Trap in Legacy .NET Framework Applications

If you’ve ever worked on a traditional .NET Framework application — the kind that predates .NET Core and .NET 5+ — this story may feel painfully familiar. I’m talking about classic .NET Framework 4.x applications (4.0, 4.5, 4.5.1, 4.5.2, 4.6, 4.6.1, 4.6.2, 4.7, 4.7.1, 4.7.2, 4.8, and the final release 4.8.1). These systems often live […]

ConfigureAwait(false): Why It Exists, What It Solves, and When Context Is the Real Bug

Async bugs in C# are often context bugs. ConfigureAwait(false) doesn’t magically fix deadlocks, but it limits the damage when async code is blocked. This article explains context capture, blast radius, and a real production incident where the true fix was using the correct framework synchronization context.

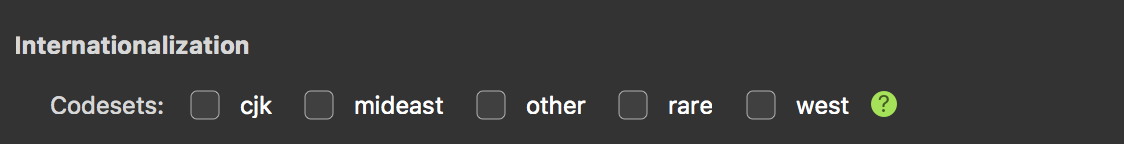

Structured RAG for Unknown and Mixed Languages

This article shows how naïve RAG fails on multilingual activity streams and why structure is the real fix. By extracting stable metadata into a JSON schema at write time, RAG becomes predictable again. Structured RAG trades extra processing for accuracy, debuggability, and reliable retrieval across mixed and unknown languages.

RAG with PostgreSQL and C# (pros and cons)

This article was born from a real failure while applying RAG to an activity stream. Multilingual, unstructured user content broke naïve retrieval. The experience exposed how fragile RAG can be without structure, language awareness, and disciplined pipelines—lessons learned only after deploying RAG in a real system.

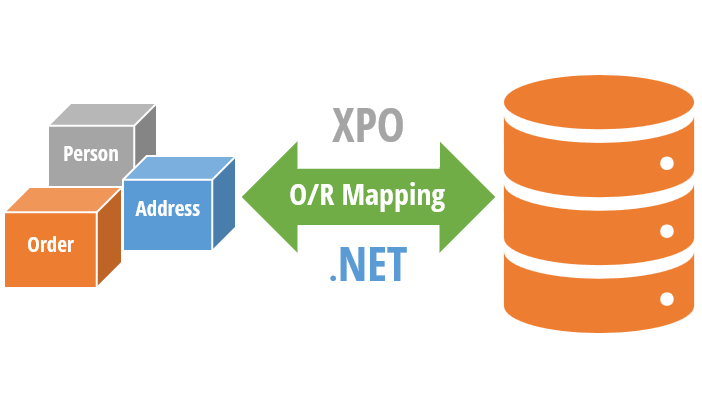

Accessing Legacy Data (FoxPro) with XPO Using a Custom ODBC Provider

Accessing legacy data doesn’t require rewriting old systems. This article explains how XPO and ODBC can be combined to integrate FoxPro, AS400, and DB2 databases into modern .NET architectures, enabling the use of Blazor, .NET MAUI, and even AI agents while respecting legacy dialects and type systems.

ODBC: A Standard That Was Never Truly Neutral

ODBC has been connecting applications to databases for decades. From FoxPro’s all-in-one world to modern .NET systems, this article explores what ODBC really is, why it still matters, and how it helps reduce database dependencies while improving portability and long-term maintainability in enterprise software.

Oqtane Event System — Hooking into the Framework

Learn how Oqtane’s event system works and how to hook into it using event subscribers. This guide covers user creation, login, and custom module events — with working C# examples and best practices for building reactive, event-driven modules.

Oqtane Silent Installation Guide

While working on Oqtane prototypes, I kept running into the setup wizard again and again — so I decided to automate the whole thing. This article shows how to configure a silent installation using the appsettings.json file, define default themes, containers, and templates, and save hours when spinning up new Oqtane sites for testing or deployment.

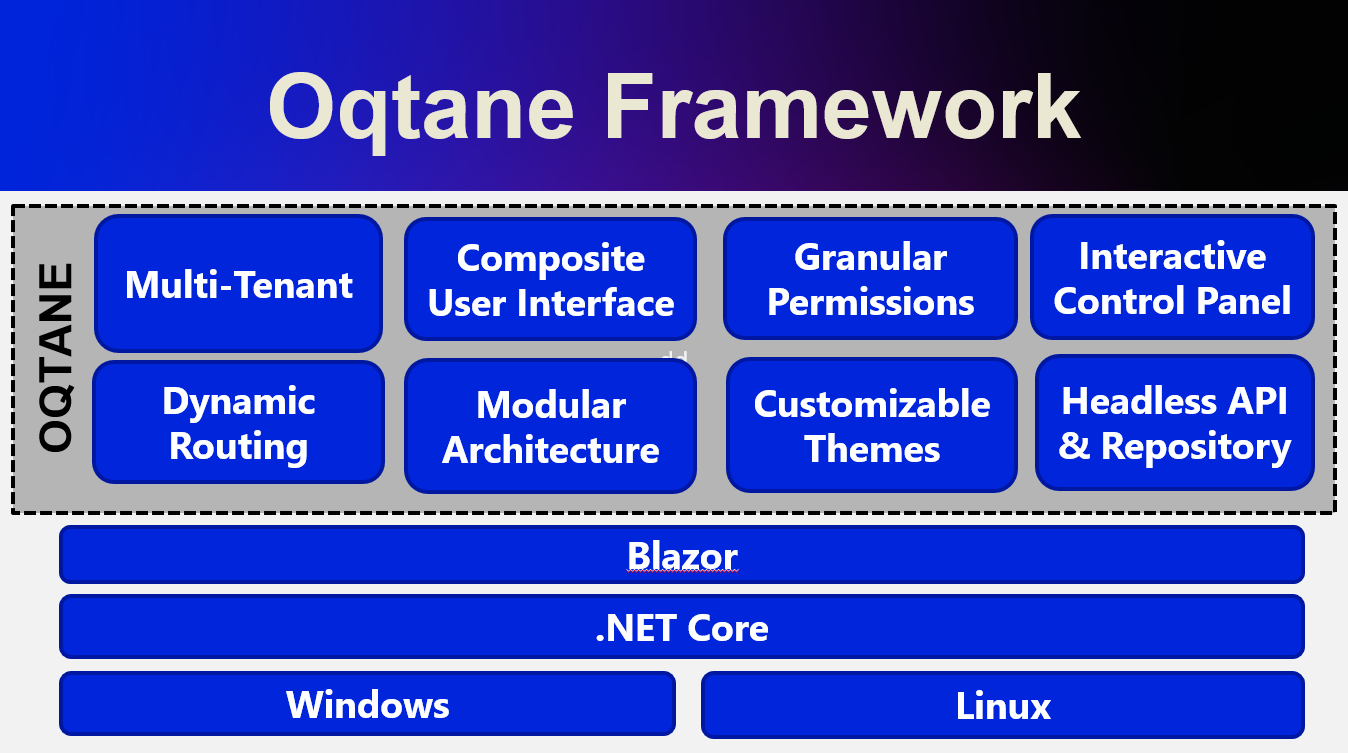

My Journey Exploring the Oqtane Framework

A developer’s field notes on learning Oqtane by reading source code, mapping lessons from XAF, and aiming for a single .NET codebase across client, server, and mobile—plus a personal update: I’m about to start learning Russian at the University of St. Petersburg.

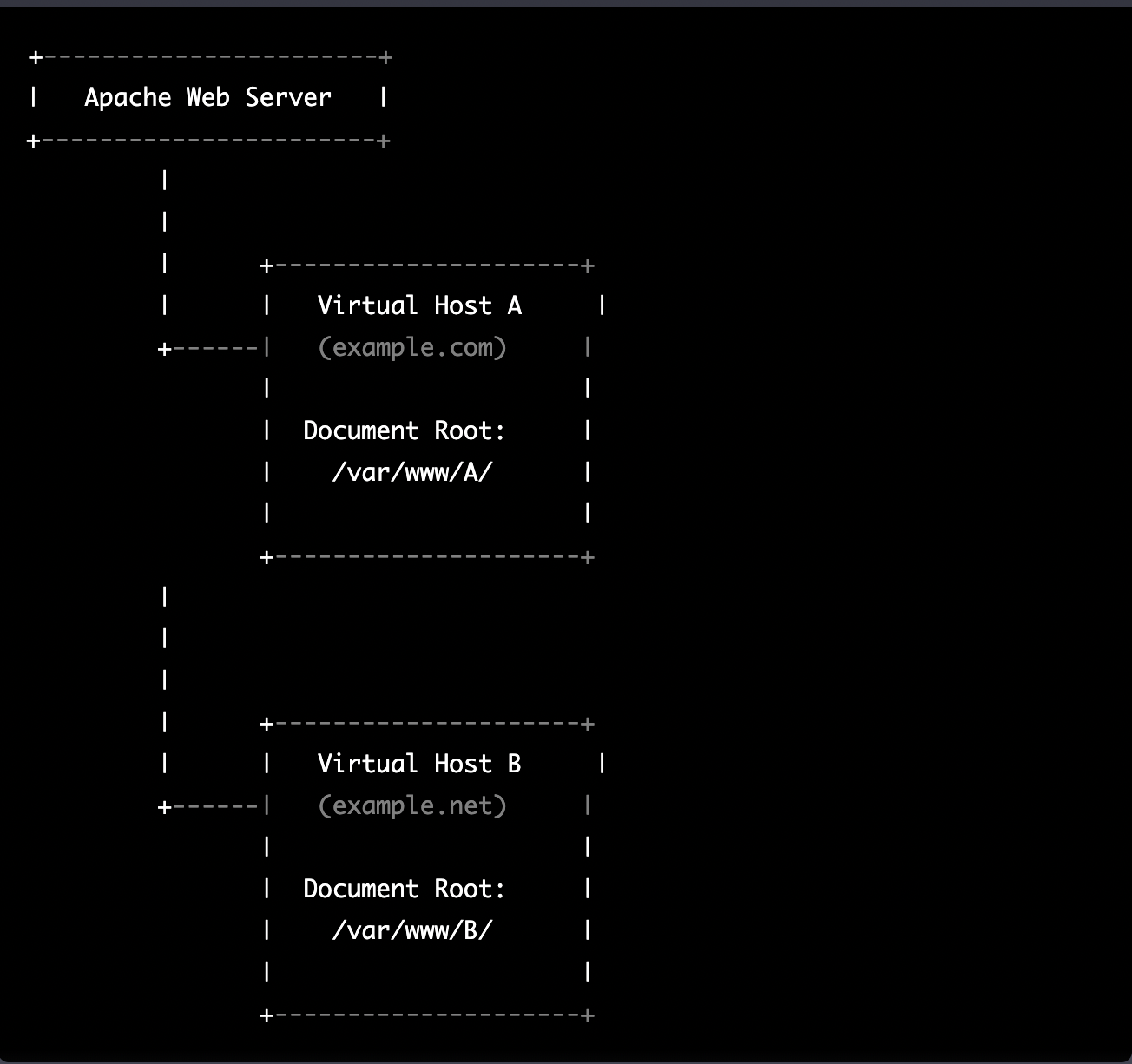

Setting Up Hostnames for Multi-Tenant Sites in Oqtane

Learn how to configure hostnames for multi-tenant sites in Oqtane using the Windows hosts file. This guide walks you through simulating local domains, routing tenants correctly, and understanding how DNS and web servers like Apache or Nginx handle requests — all explained with real examples and a bit of personal history.

Understanding Multi-Tenancy in Oqtane (and How to Set Up Sites)

Exploring how Oqtane handles multi-tenancy — setting up multiple sites within a single installation using SQLite, each with its own configuration, theme, and database options.

Oqtane Notes: Understanding Site Settings vs. App Settings for Hosting Models and Render Modes

After countless Oqtane installs, I finally learned how its configuration really works. The values in appsettings.json only apply when creating a new site — once it’s running, all runtime and render mode changes must be made from the admin panel. This post explains that subtle but important difference.

Setting Up Your Oqtane Database: First Run and Configuration

Learn how to set up your Oqtane database for the first time using either the built-in setup wizard or a manual configuration in appsettings.json. This guide walks you through each step to get your Oqtane installation running smoothly and ready for backend exploration in the next article.

Getting Started with Oqtane 6.2.x

Returning to Oqtane after some time away, I’m documenting my journey through this powerful .NET-based framework. As our office explores new development options that keep us close to the .NET ecosystem, Oqtane stands out as a compelling choice for production-sized projects. This first article in the series tackles the fundamental question every developer faces: how do you actually install and set up Oqtane? From cloning the GitHub repository to using the simplified .NET templates, I’ll walk you through two different approaches—each with its own advantages depending on your experience level and project goals. Whether you’re a beginner looking for the quickest path to productivity or an experienced developer wanting to understand the framework’s layered architecture, this guide provides the essential steps and insights to get you started with Oqtane development.

The Dangers (and Joys) of Vibe Coding

After a 4:30 a.m. coffee, I fell into a five-hour vibe-coding session that turned a small tweak into a full-blown app rewrite. This article reflects on the joy and danger of coding in flow, the lessons learned, and why sometimes you need a teammate to say, “It’s done.”

From Weasel to Sequel to “Speckified”: How Developers Twist Acronyms

Developers love twisting acronyms into funny nicknames. WSL becomes “weasel,” SQL turns into a “sequel robot,” and GitHub’s Spec Kit inspires the spooky word “speckified.” In English and Spanish, these pronunciations diverge, but the memes bring everyone together. Meet the weasel, the robot, and the wizard casting specs.

From Airport Chaos to Spec Clarity: How Writing Requirements Saved My Sanity

Ever tried vibe coding while traveling? Between airports, bad Wi-Fi, and half-baked prompts, I learned the hard way that AI doesn’t need more code; it needs better requirements. Thanks to GitHub’s Spec Kit and insights from James Montemagno and Frank Krueger on the Merge Conflict podcast, I discovered that the real magic isn’t in writing code; it’s in writing clarity. Humans reduce entropy. AI executes it.

From Vibe Coding to Vibe Documenting: How I Turned 6 Hours of Chaos into 8 Minutes of Clarity

Most programmers have fallen into the trap of “vibe coding”—throwing half-baked requirements at AI assistants and hoping for magic. I recently spent six hours vibe coding an Oqtane activity stream module, generating lots of code but making little real progress. Then I switched approaches. Instead of letting the AI guess, I documented exactly what I needed: module structure, display requirements, and integration points. The result? In eight minutes, I had a clean, working solution. The lesson is clear: AI is only as good as the clarity of its input. Humans reduce chaos; AI executes clarity.

DevExpress Documentation is now accessible as an MCP server

DevExpress users can now supercharge their GitHub Copilot experience with the new Documentation MCP server. Simply enable agent mode, create a .mcp.json configuration file, and add “Use dxdocs” to your prompts. This preview feature provides AI-powered access to DevExpress documentation, making XAF development faster and more intelligent than ever.

Understanding Keycloak: An Identity Management Solution for .NET Developers

Keycloak is an open-source Identity and Access Management solution that provides centralized authentication and Single Sign-On capabilities across multiple applications. For .NET developers, it offers seamless integration through standard protocols like OpenID Connect, eliminating authentication fatigue while providing cost-effective, vendor-independent identity management with extensive customization options for modern applications.

MailHog: The Essential Email Testing Tool for .NET Developers

MailHog is an essential open-source email testing tool that captures emails sent by your .NET applications instead of delivering them to real recipients. Perfect for testing authentication workflows, password resets, and user registration processes, MailHog provides a clean web interface to view and inspect captured emails in real-time. Easy to install on WSL using an automated script, it integrates seamlessly with System.Net.Mail and works as a drop-in replacement for production SMTP servers. With features like API access, message persistence, and failure testing, MailHog eliminates the risks and complexity of email testing during development.

Understanding the N+1 Database Problem using Entity Framework Core

The N+1 database problem is a performance killer that silently destroys application speed. This comprehensive test suite demonstrates how innocent-looking code can generate hundreds of unnecessary database queries instead of one efficient query. Through 12 detailed test cases using Entity Framework Core, we explore the difference between problematic lazy loading approaches that create 4+ queries and optimized solutions using Include() that require just 1 query. Real examples show 75% performance improvements, with actual SQL output revealing what happens under the hood. Learn projection, eager loading, split queries, and batch loading patterns to build applications that stay fast as they scale.
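
To make the query counts above concrete, here is a minimal sketch of the N+1 pattern. It is not the article's Entity Framework Core test suite: it uses Python's built-in `sqlite3` module, and the `blog`/`post` tables and helper names are assumptions invented for illustration. With three parent rows, the lazy-style loop issues 1 + 3 = 4 queries, while the join-based version (roughly what an eager load produces) issues one.

```python
import sqlite3


def build_db():
    """Create an in-memory database with 3 blogs and 2 posts each (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE blog (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE post (
            id INTEGER PRIMARY KEY,
            blog_id INTEGER REFERENCES blog(id),
            title TEXT
        );
        """
    )
    for b in range(1, 4):
        conn.execute("INSERT INTO blog (id, name) VALUES (?, ?)", (b, f"blog-{b}"))
        for p in range(2):
            conn.execute(
                "INSERT INTO post (blog_id, title) VALUES (?, ?)",
                (b, f"post-{b}-{p}"),
            )
    return conn


def load_n_plus_one(conn):
    """Lazy-loading style: one query for the parents, then one query per parent."""
    queries = 0
    blogs = conn.execute("SELECT id, name FROM blog ORDER BY id").fetchall()
    queries += 1
    result = {}
    for blog_id, name in blogs:
        rows = conn.execute(
            "SELECT title FROM post WHERE blog_id = ? ORDER BY id", (blog_id,)
        ).fetchall()
        queries += 1
        result[name] = [title for (title,) in rows]
    return result, queries


def load_eager(conn):
    """Eager-loading style: a single JOIN returns parents and children together."""
    rows = conn.execute(
        """
        SELECT b.name, p.title
        FROM blog b
        LEFT JOIN post p ON p.blog_id = b.id
        ORDER BY b.id, p.id
        """
    ).fetchall()
    result = {}
    for name, title in rows:
        posts = result.setdefault(name, [])
        if title is not None:
            posts.append(title)
    return result, 1


if __name__ == "__main__":
    conn = build_db()
    _, lazy_queries = load_n_plus_one(conn)
    _, eager_queries = load_eager(conn)
    print(f"lazy: {lazy_queries} queries, eager: {eager_queries} query")
```

Both functions return the same data; only the number of round trips differs, and the gap grows linearly with the number of parent rows.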

Day 4 (the missing day): Building Data Import/Export Services for Your ERP System

In Day 4 of our ERP development series, we tackle a crucial but often overlooked feature: data import/export services. Learn how to build robust CSV import/export functionality for Chart of Accounts, including error handling, validation, and testing strategies that you can apply throughout your enterprise system.

Building a Comprehensive Accounting System Integration Test – Day 5

This article explores the implementation of a comprehensive integration test for an accounting system, demonstrating how Document and Chart of Accounts modules work together. Using a collection-based approach to simulate database interactions, the test validates double-entry accounting principles while ensuring proper transaction processing and balance verification across various business scenarios.

Understanding the Chart of Accounts Module: Day 3 – The Backbone of Financial Accounting Systems

The chart of accounts module forms the foundation of any accounting system, organizing financial transactions by categorizing accounts. A well-designed implementation includes entity definitions, type enumerations, validation logic, and balance calculation functionality—all working together to support accurate financial reporting while adhering to SOLID design principles.

Understanding the Document Module: Day 2 – The Foundation of a Financial Accounting System

The Document Module forms the foundation of financial accounting systems, organizing financial data into three interconnected components: Documents (the source records), Transactions (financial impacts), and Ledger Entries (account changes). This modular architecture ensures data integrity, maintains audit trails, supports compliance, and enables flexible integration of various financial processes.

Building an Agnostic ERP System: Day 1 – Core Architecture

Building an agnostic ERP system using SOLID design principles and C# 9 addresses recurring challenges in system design. The core architecture features foundational interfaces like IEntity, IAuditable, and IArchivable, creating a technology-independent foundation that maintains consistent performance while enabling reimplementation across various platforms like DevExpress XAF or Entity Framework.

The Anatomy of an Uno Platform Solution

Understanding the structure of an Uno Platform solution is essential for effective cross-platform development. This article examines the “black magic” behind Uno Platform’s architecture, breaking down the main components: the shared project containing cross-platform code, platform-specific head projects, and critical build configuration files. We explore the power of Uno.Sdk, which simplifies development with automatic package management through UnoFeatures, target framework specifications, and single-project configuration. By leveraging this structured approach, developers can maintain a single codebase targeting multiple platforms, dramatically reducing complexity while ensuring consistent experiences across Windows, iOS, Android, macOS, and WebAssembly.

Running Docker on a Windows Surface ARM64 Using WSL2

Discover how to install and run Docker Community Edition on a Microsoft Surface with ARM64 architecture using Windows Subsystem for Linux 2 (WSL2). This step-by-step guide walks you through the entire process, from preparing your WSL2 environment to verifying your Docker installation is working properly. Perfect for developers with ARM-based Windows devices looking to leverage containerization without compatibility headaches.

Testing SignalR Applications with Integration Tests

Testing SignalR chat applications requires a different approach than traditional API testing. By creating a test host server with a handler instead of an HTTP client, we can simulate real-world scenarios where messages are sent through SignalR hubs, allowing us to verify the complete set of moving parts.

My Microsoft MVP Summit Experience

It’s been a week since the Microsoft MVP Summit. In Istanbul, I was lucky to rest in the airport’s business lounge before my 15-hour flight to Seattle. After overcoming arrival challenges, I enjoyed meaningful conversations with Jerome from Uno, Mads Kristensen, Jeremy Sinclair, and James Montemagno.

Getting Started with Uno Platform: First Steps and Configuration Choices

Discover the essentials of creating applications with Uno Platform. This guide walks you through the initial setup process, from project creation to configuration choices, helping you navigate framework selection, target platforms, presentation patterns, and more. Learn from my two-week journey and make informed decisions for your Uno Platform projects.

Connecting WASM Apps to APIs: Overcoming CORS Challenges

Connecting WebAssembly apps to APIs can be challenging due to CORS restrictions. After struggling with WCF services, I discovered that Uno Platform makes this integration surprisingly seamless. Understanding CORS is essential—it’s a security feature that controls cross-origin requests in browsers, requiring proper server configuration to enable WASM-to-API communication.

DNS and Virtual Hosting: A Personal Journey

Discover how DNS enables multiple websites to share a single IP address through virtual hosting. Learn from my journey from teenage networking experiments to professional server management, and see how the Windows hosts file can be your secret weapon for local development and troubleshooting without affecting live environments.

Troubleshooting MAUI Android HTTP Client Issues: Native vs Managed Implementation

When your MAUI Android app connects to public APIs but fails with internal network services, HTTP client implementation differences are often the culprit. By switching between native and managed HTTP clients and addressing certificate validation and TLS compatibility issues, you can identify and resolve these common networking challenges.

My Adventures Picking a UI Framework: Why I Chose Uno Platform

This year I challenged myself to learn UI development after years of back-end coding. After considering Flutter and React, I rediscovered Uno Platform. With improved tooling and seamless integration with my .NET expertise, Uno became my choice. I’ll document my journey creating multi-platform applications using this promising framework.

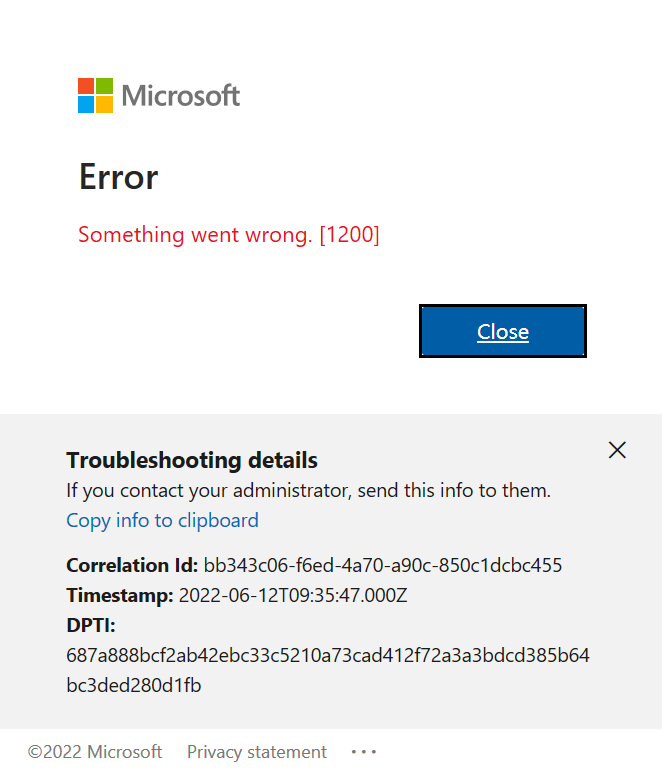

Visual Studio Sign-In Issues: A Simple Fix (Fixing Visual Studio Sign-In Error Code: 3399680404)

Frustrated with Visual Studio sign-in issues? Despite resetting my computer, I couldn’t change accounts until I discovered a simple fix. Navigate to Options > Environment > Accounts and change authentication from ‘Windows authentication broker’ to ‘Embedded web browser.’ This small adjustment solved my persistent problem and might work for you too!

Exploring the Uno Platform: Handling Unsafe Code in Multi-Target Applications

This weekend I experimented with the Uno platform, a multi-OS UI framework for developing mobile, desktop, web, and Linux applications from a single codebase. While Uno’s concept is excellent, its tooling previously had stability issues after Visual Studio updates. Surprisingly, I found setup now works effortlessly on both my ARM-based Surface laptop and x64 MSI computer. However, when compiling demo applications, I encountered issues with unsafe code generation. The solution involves uncommenting the AllowUnsafeBlocks setting (set to true) in the project file. This enables C# unsafe code blocks necessary for pointer operations, fixed statements, and direct memory manipulation required by some Uno platform components.

State Machines and Wizard Components: A Clean Implementation Approach

This article explores implementing wizard components using state machine architecture. By separating UI from logic, developers can create cleaner, more maintainable multi-step forms. The approach centralizes state control through a WizardStateMachineBase class that manages page transitions, significantly simplifying development challenges and creating extensible interfaces that enhance user experience by limiting decisions at each step.
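
As a rough illustration of the idea, the sketch below keeps page-transition logic in one small class that a UI could query and drive. It is written in Python rather than C#, and the class, its members, and the page names are hypothetical stand-ins, not the article's `WizardStateMachineBase`.

```python
class WizardStateMachine:
    """Hypothetical linear wizard: owns the current page and the allowed transitions."""

    def __init__(self, pages):
        if not pages:
            raise ValueError("a wizard needs at least one page")
        self._pages = list(pages)
        self._index = 0

    @property
    def current_page(self):
        return self._pages[self._index]

    @property
    def can_go_back(self):
        return self._index > 0

    @property
    def can_go_next(self):
        return self._index < len(self._pages) - 1

    def next(self):
        """Advance one page, or raise if already on the last page."""
        if not self.can_go_next:
            raise RuntimeError("already on the last page")
        self._index += 1
        return self.current_page

    def back(self):
        """Go back one page, or raise if already on the first page."""
        if not self.can_go_back:
            raise RuntimeError("already on the first page")
        self._index -= 1
        return self.current_page


if __name__ == "__main__":
    wizard = WizardStateMachine(["Welcome", "Details", "Confirm"])
    wizard.next()
    print(wizard.current_page, wizard.can_go_back, wizard.can_go_next)
```

The UI layer only reads `current_page`, `can_go_back`, and `can_go_next` to decide what to render and which buttons to enable, so the transition rules can be tested without any UI at all.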

Windows Server Setup Guide with PowerShell

Server scripting solutions for Windows Server 2016 setup: Streamline your Windows Server deployment with automation scripts that tackle common pain points. Learn how to efficiently disable IE Enhanced Security, install Web Server roles with Web Deploy, fix permission issues, set up SQL Server Express, and enable remote database access, all without endless UI clicking.

Setting Up WSL 2: My Development Environment Scripts

After a problematic Windows update on my Surface computer that prevented me from compiling .NET applications, I spent days trying various fixes without success. Eventually, I had to format my computer and start fresh. This meant setting up everything again – Visual Studio, testing databases, and all the other development tools. To make future setups easier, […]

Understanding System Abstractions for LLM Integration

Understanding how to integrate large language models with existing systems requires knowledge of different abstraction levels. From APIs and REST protocols to SDK implementations, developers must choose the appropriate interaction level for their automation needs. Whether using Microsoft Semantic Kernel or Model Context Protocol, the key is selecting the right integration approach for your system.

Bridging Traditional Development using XAF and AI: Training Sessions in Cairo

A transformative training session in Cairo demonstrated how modern application frameworks and AI can revolutionize business software development. By integrating DevExpress’s XAF with Microsoft Semantic Kernel, JavaScript developers discovered powerful alternatives to traditional web development approaches, bridging the gap between conventional LOB applications and AI-powered solutions.

Hard to Kill: Why Auto-Increment Primary Keys Can Make Data Sync Die Harder

Auto-increment primary keys, while popular among developers, can create significant challenges in data synchronization scenarios. Each database engine implements these differently – from SQL Server’s IDENTITY to PostgreSQL’s sequences – making cross-database coordination complex. Understanding these implementations is crucial when designing systems that require reliable data synchronization across distributed environments.
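
A small sketch of why this bites during synchronization: two disconnected databases each hand out the same auto-increment value, so their rows collide when merged, whereas client-generated unique keys do not. This uses Python's built-in `sqlite3` and `uuid` modules. The `customer` table and the helper names are assumptions for illustration, not code from the article.

```python
import sqlite3
import uuid


def make_node(key_sql):
    """Create an isolated in-memory database standing in for one sync node."""
    conn = sqlite3.connect(":memory:")
    conn.execute(f"CREATE TABLE customer (id {key_sql}, name TEXT)")
    return conn


def insert_autoincrement(conn, name):
    """Let the database engine assign the key; returns the generated id."""
    cur = conn.execute("INSERT INTO customer (name) VALUES (?)", (name,))
    return cur.lastrowid


def insert_guid(conn, name):
    """Generate the key on the client so it is unique across nodes."""
    key = str(uuid.uuid4())
    conn.execute("INSERT INTO customer (id, name) VALUES (?, ?)", (key, name))
    return key


def merge(target, source):
    """Copy every row from source into target; return the number of key collisions."""
    collisions = 0
    for row in source.execute("SELECT id, name FROM customer").fetchall():
        try:
            target.execute("INSERT INTO customer (id, name) VALUES (?, ?)", row)
        except sqlite3.IntegrityError:
            collisions += 1
    return collisions


if __name__ == "__main__":
    a = make_node("INTEGER PRIMARY KEY AUTOINCREMENT")
    b = make_node("INTEGER PRIMARY KEY AUTOINCREMENT")
    insert_autoincrement(a, "Alice")  # node A assigns id 1
    insert_autoincrement(b, "Bob")    # node B also assigns id 1
    print("auto-increment collisions:", merge(a, b))

    c = make_node("TEXT PRIMARY KEY")
    d = make_node("TEXT PRIMARY KEY")
    insert_guid(c, "Alice")
    insert_guid(d, "Bob")
    print("GUID collisions:", merge(c, d))
```

The sketch only covers the collision itself. The engine-specific behavior the article mentions (IDENTITY, sequences) adds further differences on top of it.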

SyncFramework for XPO: Updated for .NET 8 & 9 and DevExpress 24.2.3!

SyncFramework for XPO is a specialized implementation of our delta encoding synchronization library, designed specifically for DevExpress XPO users. It enables efficient data synchronization by tracking and transmitting only the changes between data versions, optimizing both bandwidth usage and processing time. What’s new: base target framework updated to .NET 8.0; added compatibility with .NET 9.0 […]

SyncFramework Update: Now Supporting .NET 9 and EfCore 9!

SyncFramework is a C# library that simplifies data synchronization using delta encoding technology. Instead of transferring entire datasets, it efficiently synchronizes by tracking and transmitting only the changes between data versions, significantly reducing bandwidth and processing overhead. What’s new: all packages now target .NET 9; BIT.Data.Sync packages updated to […]

Say my name: The Evolution of Shared Libraries

From VB6’s COM components to .NET’s GAC and today’s private dependencies, the evolution of shared libraries reflects the changing landscape of software development. In my early career, we faced “DLL Hell” when shared components in Windows System directories would conflict or break multiple applications. The .NET Framework introduced the Global Assembly Cache with unique assembly identities, allowing multiple versions to coexist. Today, with storage being abundant, we’ve moved towards shipping applications with their own private dependencies. This journey shows how solutions evolve not just technically, but in response to real-world problems and changing resources.

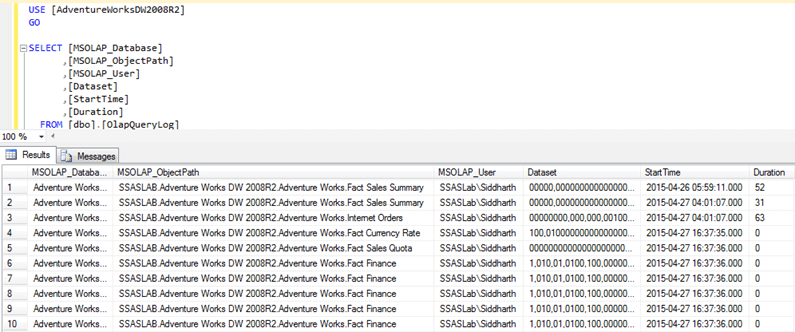

ADOMD.NET: Beyond Rows and Columns – The Multidimensional Evolution of ADO.NET

When I first encountered the challenge of migrating hundreds of Visual Basic 6 reports to .NET, I discovered the power of specialized data analytics tools through ADOMD.NET. The journey began with a seemingly simple “Sales Gap” report that identified periods when regular customers stopped purchasing specific items. As our data grew, the report’s execution time increased from one minute to an unbearable 15 minutes. While we couldn’t implement ADOMD.NET due to database constraints, the investigation taught valuable lessons about choosing the right tools for analytical workloads and understanding the limitations of running complex analytics on transactional databases.

Back to the Future of Dev Tools: DevExpress CLI templates

Technology, like fashion, moves in cycles. Just as bell-bottoms and vinyl records make their comebacks, we’re witnessing a fascinating return to command-line interfaces in software development. While the industry once raced towards graphical interfaces, developers are now embracing CLI tools with renewed enthusiasm. This shift is particularly evident in modern application templates, where DevExpress and others are creating cross-platform solutions that blend the best of both worlds. Through the lens of project templates and development tools, we explore how technology’s future often leads us back to its past.

The Dark Magic of Dynamic Assemblies: A Tale of .NET Emit

Explore the arcane world of runtime code generation in .NET through the lens of a real-world challenge. Journey back to the early 2000s when a seemingly simple task of parsing ERP documentation led to the discovery of Emit – one of .NET’s most powerful and mysterious features. Learn how this fundamental building block of dynamic assembly generation can be both a powerful ally and a dangerous tool in your development arsenal. From legacy system integration to modern alternatives like Source Generators, discover why understanding Emit remains crucial for developers who dare to venture beyond the conventional boundaries of .NET programming.

The Dark Arts of Self-Writing Code: A Journey from DOS to .NET Sorcery

In “The Dark Arts of Self-Writing Code: A Journey from DOS to .NET Sorcery,” the author recounts an inspiring journey from childhood fascination with MS-DOS commands to mastering metaprogramming in .NET. The narrative begins with humble experiments in AUTOEXEC.BAT scripts and evolves through explorations of languages like Turbo Pascal, C++, and C#. The author shares transformative moments, such as learning reflection—a form of “code magic” that enables runtime introspection and modification. Along the way, practical lessons are imparted: cache operations, guard sensitive data, and balance performance costs. Ultimately, the tale emphasizes the power of curiosity and continuous learning in programming mastery.

The Dark Magic of .NET: Exploring Harmony Library in 2025

Explore the dark magic of .NET with Harmony, a powerful library that transforms runtime method patching in C# applications. At Xari, where we tackle everything from LOB applications to AI systems, Harmony has become an invaluable tool in our development arsenal. This sophisticated library offers three powerful approaches to code modification: Prefix patches for pre-execution intervention, Postfix patches for result manipulation, and Transpilers for direct IL code modification. While it might seem like dark magic, Harmony is really about understanding and leveraging .NET’s architecture to achieve what seems impossible, from performance monitoring to legacy system enhancement.

The AnyCPU Illusion: Native Dependencies in .NET Applications

This article explores the limitations of the AnyCPU configuration in .NET applications, particularly when dealing with native dependencies. The author shares a personal story about a realization during a trip, highlighting the challenges of running x64 applications on ARM-based systems like Apple Silicon. The narrative transitions into technical insights about native DLL loading requirements and architecture-specific considerations. It emphasizes the importance of native driver compatibility for ORMs like Entity Framework Core and DevExpress XPO. Solutions such as architecture-specific deployments and managed fallbacks are proposed, making it clear that AnyCPU is not a universal solution for cross-platform development.

Exploring .NET 9’s Sequential GUIDs: A Game-Changer for XAF/XPO Developers

Exploring .NET 9’s latest features reveals an exciting addition for XAF/XPO developers: Version 7 GUIDs (RFC 9562 specification). This new implementation solves a common challenge with traditional GUIDs – their non-sequential nature. Through practical experience with the SyncFramework, where Delta processing order proved problematic due to unpredictable GUID sorting, the need for sortable identifiers became evident. .NET 9’s CreateVersion7() method now generates sequential GUIDs, eliminating the need for custom sequence services. This feature significantly simplifies scenarios requiring reliable ordering, making it a valuable tool for developers working with distributed systems and synchronization frameworks.
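
To show why version 7 identifiers sort by creation time, here is a minimal sketch of the RFC 9562 bit layout: a 48-bit Unix timestamp in milliseconds, a 4-bit version, 12 random bits, a 2-bit variant, and 62 more random bits. It is an illustration in Python, not the .NET implementation behind `CreateVersion7()`. The function name `uuid7` and its `unix_ms` parameter are assumptions made for this example.

```python
import os
import time
import uuid


def uuid7(unix_ms=None):
    """Build a version 7 UUID following the RFC 9562 field layout (illustrative sketch)."""
    if unix_ms is None:
        unix_ms = time.time_ns() // 1_000_000

    rand = int.from_bytes(os.urandom(10), "big")  # 80 random bits; 74 are used
    rand_a = (rand >> 62) & 0xFFF                 # 12 bits
    rand_b = rand & ((1 << 62) - 1)               # 62 bits

    value = (unix_ms & ((1 << 48) - 1)) << 80     # unix_ts_ms: bits 127..80
    value |= 0x7 << 76                            # version:    bits 79..76
    value |= rand_a << 64                         # rand_a:     bits 75..64
    value |= 0b10 << 62                           # variant:    bits 63..62
    value |= rand_b                               # rand_b:     bits 61..0
    return uuid.UUID(int=value)


if __name__ == "__main__":
    earlier = uuid7(unix_ms=1_000)
    later = uuid7(unix_ms=2_000)
    # The timestamp occupies the most significant bits, so later IDs compare greater.
    print(earlier, later, earlier < later)
```

Because the timestamp leads, identifiers created in different milliseconds order chronologically. In this sketch, two identifiers created within the same millisecond are ordered only by their random bits.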

Understanding XtraReports: A Windows Forms Developer’s Guide

Transitioning from Windows Forms to XtraReports can be a seamless journey for .NET developers. Leveraging familiar concepts like control containers, event handling, and data binding, XtraReports reimagines Windows Forms for robust reporting needs. My journey began in the early 2000s, evolving from VB6 with Crystal Reports to adopting DevExpress tools. This article explores the architectural parallels, design experience, and best practices to master XtraReports, guiding developers to efficiently design paper-oriented layouts with features like bands, expression bindings, and calculated fields. Discover how your existing expertise can accelerate understanding and productivity in creating professional reports.

Guide to Blazor Component Design and Implementation for backend devs

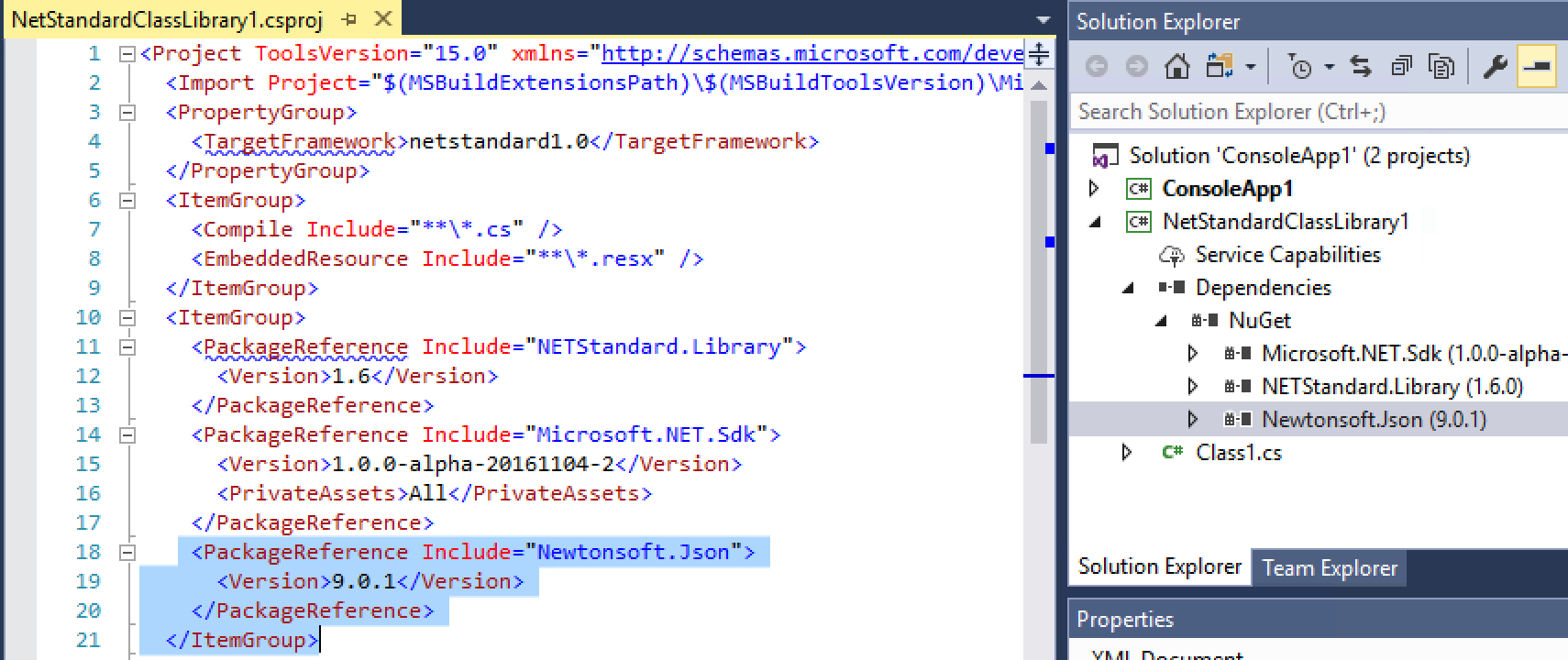

Blazor offers a modern, versatile approach to UI development for .NET developers, bridging the gap between web and desktop applications. As a seasoned .NET developer, I’ve explored platforms like Windows Forms, WPF, Xamarin, and MAUI, but Blazor stands out for its flexibility and broad applicability. From backend-focused frameworks like XAF to crafting custom NuGet libraries, my journey highlights the evolution of .NET development and the growing need for robust, reusable UI components. This guide shares key insights and practical lessons learned while building Blazor components, helping backend developers embrace frontend challenges with effective design patterns and streamlined implementation strategies.

Head Content Injection in .NET 8 Blazor Web Apps

With the release of .NET 8, Blazor introduced a significant change in how developers manage head content injection in web applications. The new unified template replaces the traditional _Host.cshtml approach with App.razor, introducing the HeadOutlet component for head content management. This shift offers two main approaches: adapting existing Tag Helpers to target HeadOutlet, or using a more idiomatic component-based solution with HeadContent. While both methods are viable, the component approach provides better integration with Blazor’s architecture, offering improved render mode support, dynamic content capabilities, and type safety for modern web applications.

Using DevExpress Chat Component and Semantic Kernel ResponseFormat to show a product carousel

On a snowy Saturday, I decided to explore a Blazor project using the DevExpress Chat Component and Semantic Kernel. This setup allows us to display product lists as carousels in chat, leveraging AI to dynamically format responses in JSON. Check out how prompt execution settings ensure seamless, adaptable LLM responses.

Async Code Execution in XAF Actions

Async execution in XAF can be challenging, especially when it comes to keeping the UI responsive. This article covers approaches like using async actions, potential pitfalls, and a solution with an AsyncBackgroundWorker for better UI interaction. Complete code examples are available on GitHub for detailed exploration and implementation.

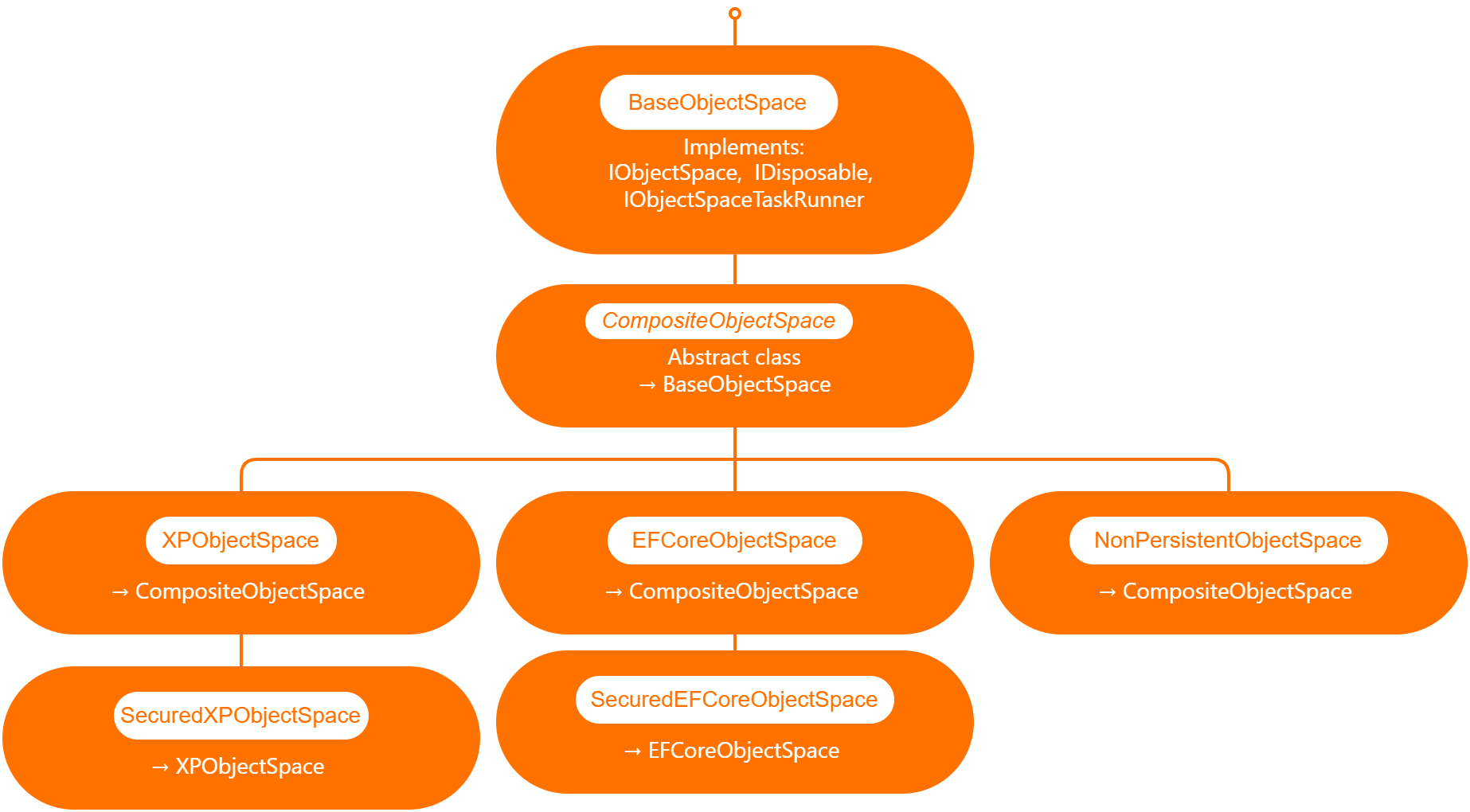

Querying Semantic Memory with XAF and the DevExpress Chat Component

Explore how to integrate DevExpress’s new chat component with XAF and Semantic Kernel. This post walks through creating a custom property editor, using XPO for Semantic Memory storage, and handling message callbacks. Learn how to store and query memories to enhance your AI-driven applications with ease.

Rewriting the XPO Semantic Kernel Memory Store to be Compatible with XAF

This article explores the process of adapting the XPO memory store to work with XAF by rewriting its data layer and making it more flexible for handling embeddings. It introduces the IXpoEntryManager interface to enhance object creation and querying while ensuring compatibility with XAF’s architecture.

AI-Powered XtraReports in XAF: Unlocking DevExpress Enhancements

This blog post explores how to integrate AI-powered enhancements into DevExpress Reporting using Blazor. It walks through the setup process, including NuGet package references and configuring AI behavior for XtraReport. With easy-to-follow steps and code snippets, you’ll learn how to leverage AI features like summarization in your reports effortlessly.

The New Era of Smart Editors: Creating a RAG system using XAF and the new Blazor chat component

This article explores the integration of AI-powered chat functionality into XAF applications using the new DevExpress components. It guides developers through creating a custom property editor, utilizing Retrieval-Augmented Generation (RAG) for enhanced document analysis, and demonstrates how to bridge XAF and Blazor for seamless AI integration in .NET applications.

Integrating DevExpress Chat Component with Semantic Kernel: A Step-by-Step Guide

Integrating DevExpress’s chat component with the Semantic Kernel for AI-powered chat completions is a game-changer. This step-by-step guide shows how to set up an intelligent chat interface using OpenAI and .NET. Learn how to combine these technologies and bring dynamic, context-aware conversations into your applications effortlessly!

Test Driving DevExpress Chat Component

If you’re a Blazor developer looking to integrate AI-powered chat functionality into your applications, the new DevExpress DxAIChat component offers a turnkey solution. It’s designed to make building chat interfaces as easy as possible, with out-of-the-box support for simple chats, virtual assistants, and even Retrieval-Augmented Generation (RAG) scenarios. The best part? You don’t have to […]

Using the IMemoryStore Interface and DevExpress XPO ORM to Implement a Custom Memory Store for Semantic Kernel

This article demonstrates how to implement a custom memory store in Semantic Kernel using the IMemoryStore interface and DevExpress XPO ORM. By leveraging XPO’s database abstraction, you can easily manage AI-driven memory records across multiple databases, ensuring flexibility and scalability for your AI applications in .NET environments.

Leveraging Memory in Semantic Kernel: The Role of Microsoft.SemanticKernel.Memory Namespace

This article explores the **Microsoft.SemanticKernel.Memory Namespace**, focusing on the **VolatileMemoryStore** and how any class implementing **IMemoryStore** can serve as a memory backend for **SemanticTextMemory**. It highlights the flexibility of the memory system in Semantic Kernel for integrating custom memory stores, enhancing AI-driven applications.

Memory Types in Semantic Kernel

Semantic Kernel simplifies integrating AI models into your applications, with chat completions being the most common interaction method. Temporary memory, managed by the ChatHistory object or a string argument, is lost once the host class is disposed. In the next article, we’ll explore long-term memory options for more persistent solutions.

Understanding Shadow Sockets and How They Differ from Traditional VPNs

Shadow Sockets (Shadowsocks) is an open-source encrypted proxy project created to bypass internet censorship, particularly the Great Firewall of China. Unlike traditional VPNs, Shadowsocks uses the SOCKS5 protocol, making it less detectable and more efficient. Developed by “clowwindy” in 2012, Shadowsocks has evolved through community contributions. It offers better performance and lower latency compared to VPNs, making it ideal for high-censorship environments. While VPNs provide comprehensive security and ease of use, Shadowsocks excels in evading detection and maintaining a lightweight connection, making it a valuable tool for accessing unrestricted internet content.

Creating XAF Property Editors in a Unified Way for Windows Forms and Blazor Using WebView

The eXpressApp Framework (XAF) from DevExpress supports multiple UI platforms like Windows Forms and Blazor. This article demonstrates how to create unified property editors for both platforms using WebView and the Monaco Editor. By leveraging these tools, developers can streamline maintenance and ensure a consistent user experience across platforms. Key steps include setting up a new XAF solution, creating a Razor Class Library, integrating it into Windows Forms, and implementing XAF property editors. The result is a versatile application with powerful property editors, enhancing the development process and user interface cohesion.

The Critical Need for AI Legislation in El Salvador: Ensuring Ethical and Innovative Growth

El Salvador is on the brink of a technological revolution with its ambitious plans to integrate Artificial Intelligence (AI) across various sectors. To harness AI’s full potential while mitigating risks, the country urgently needs a comprehensive AI legal framework. This legislation will ensure ethical standards, data protection, innovation incentives, workforce transition programs, and international cooperation. Establishing such a framework will position El Salvador as a leader in AI innovation, driving economic growth and enhancing public services, while safeguarding citizens’ rights and promoting equitable treatment in the digital age.

El Salvador: Digital Transformation Initiatives

El Salvador is undergoing a remarkable digital transformation under President Nayib Bukele, driven by strategic partnerships with tech giants like Google. This includes advancements in healthcare, education, and digital government services. The government has invested significantly in digital infrastructure, expanding internet access and implementing 5G technology. The introduction of the Chivo Wallet has promoted cashless transactions and financial inclusion. Efforts to improve digital literacy are widespread, targeting the general public, students, and government employees. These initiatives aim to position El Salvador as a leader in digital innovation within Latin America.

El Salvador: The Implementation of Bitcoin as Legal Tender

El Salvador made global headlines on June 9, 2021, by becoming the first country to adopt Bitcoin as legal tender. This unprecedented move, spearheaded by President Nayib Bukele, aimed to modernize the economy and increase financial inclusion. The implementation included the launch of the Chivo Wallet and Bitcoin ATMs, along with significant government investments. While the initiative sparked international interest and boosted tourism, it also faced skepticism due to Bitcoin’s volatility. This article explores the economic and social impacts of this bold experiment, highlighting both the opportunities and challenges of integrating cryptocurrency into a national economy.

El Salvador’s Technological Revolution

El Salvador has recently become a focal point for technological innovation under President Nayib Bukele. Born in Suchitoto during the civil war, I have observed this transformation from afar as a digital nomad living in Saint Petersburg, Russia. This article, the first in a series, explores how blockchain technology, financial services, and AI can drive growth in small countries like El Salvador. El Salvador has traditionally faced economic challenges such as poverty, gang violence, and a reliance on remittances. President Bukele’s administration has prioritized technological innovation to address these issues. A significant move was the adoption of Bitcoin as legal tender in 2021, aimed at promoting financial inclusion, reducing remittance costs, and attracting foreign investment. The government has also partnered with tech giants like Google to modernize its digital infrastructure, improve healthcare through telemedicine, and enhance education with AI tools. Bukele’s broader strategy involves shifting the economy from agriculture to technology, financial services, and tourism to create a more resilient and diverse economic base. This series will delve deeper into these initiatives, examining their impact and future prospects, and propose potential AI legislation to ensure continued leadership in technology within Latin America.

Embrace the Dogfood: How Dogfooding Can Transform Your Software Development Process

Dogfooding, or using your own software internally, can transform your development process. By experiencing your product firsthand, you catch bugs early, enhance quality, and improve user experience. Real-world usage provides immediate feedback, speeding up iteration and refining features. Companies like Microsoft, Google, and Slack use this practice to ensure reliability and build credibility. Start by integrating your software into daily tasks, encourage team participation, and set up easy feedback channels. Despite challenges like bias and resource allocation, dogfooding offers invaluable insights, leading to better, more trustworthy software. Embrace dogfooding and create products you truly rely on. Happy coding!

Aristotle’s “Organon” and Object-Oriented Programming

Discover the timeless connection between Aristotle’s “Organon” and Object-Oriented Programming (OOP). Aristotle, the ancient Greek philosopher, revolutionized logical thought with his systematic approach in the “Organon,” a collection of works on categories, propositions, and syllogisms. Fast forward to modern software development, OOP organizes code into classes, objects, and methods, promoting modularity and efficiency. Both systems emphasize structured, logical thinking and error handling. By bridging these ancient and modern principles, we gain a deeper appreciation for the enduring power of logical organization in both philosophical inquiry and technological innovation.

The mystery of lost values: Understanding ASCII vs. UTF-8 in Database Queries

When dealing with databases, understanding character encodings like ASCII and UTF-8 is crucial. ASCII uses 7 bits for each character, allowing 128 unique symbols, while UTF-8 is a variable-width encoding that can represent over a million characters. This difference impacts case-sensitive queries. For example, querying usernames from ‘A’ to ‘z’ includes all uppercase and lowercase letters and some special characters in ASCII. Understanding these ranges ensures accurate and efficient queries.
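The range claim above is easy to check by hand. The following Python sketch lists the characters that fall between 'A' and 'z' in ASCII code order but are not letters; it only inspects code points and makes no assumption about any particular database or collation.

```python
import string

# Every ASCII character from 'A' (65) through 'z' (122), inclusive.
in_range = [chr(code) for code in range(ord("A"), ord("z") + 1)]

# The characters in that range that are not letters (codes 91 to 96).
extras = [ch for ch in in_range if ch not in string.ascii_letters]

print(len(in_range))  # 58 characters: 52 letters plus 6 others
print(extras)         # ['[', '\\', ']', '^', '_', '`']

# UTF-8 is variable-width: ASCII characters stay one byte, others take more.
print(len("A".encode("utf-8")), len("é".encode("utf-8")), len("€".encode("utf-8")))  # 1 2 3
```

A byte-ordered range query from 'A' to 'z' would therefore also match values that start with any of those six characters.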

The Shift Towards Object Identifiers (OIDs): Why Compound Keys in Database Tables Are No Longer Valid

Compound keys in database tables, once essential for normalization, may no longer be ideal. They complicate design and maintenance, especially when tied to business logic. Object identifiers (OIDs) offer a simpler alternative, enhancing schema flexibility and performance. Modern storage solutions reduce the need for strict normalization, allowing a balanced approach. Many ORMs, like XPO from DevExpress, prefer OIDs for easier database interaction and compatibility with object-oriented programming. Simplifying schemas with OIDs can improve maintainability, performance, and decouple business logic, making database systems more robust and efficient.

Getting Started with Stratis Blockchain Development Quest: Running Your First Stratis Node

Stratis is a flexible blockchain platform built on C# and .NET. This guide helps you start developing by running a Stratis node. It covers installing .NET Core SDK, cloning the Stratis Full Node repository, building, and running the node to synchronize with the network, providing a foundation for your blockchain development.

Discovering the Simplicity of C# in Blockchain Development with Stratis

Discover how transitioning from Solidity to Stratis simplified my blockchain development journey. Stratis supports smart contracts using C#, making development more accessible and efficient. Learn about the challenges with Solidity, the benefits of Stratis, and the tools needed to start developing smart contracts in a familiar C# environment.

Solid Nirvana: The Ephemeral State of SOLID Code

Achieving a SOLID state in code is like reaching nirvana — a fleeting moment of perfection. Regularly measuring adherence to SOLID principles using metrics can guide continuous improvement. Embrace these temporary snapshots of perfection to maintain a balanced perspective in the ever-evolving journey of software development.

Choosing the Right JSON Serializer for SyncFramework

Choosing the right JSON serializer for SyncFramework is crucial for performance and data integrity. This article compares DataContractJsonSerializer, Newtonsoft.Json, and System.Text.Json, highlighting their features, use cases, and handling of DataContract requirements to help you make an informed decision for efficient synchronization in .NET applications.

Why I Use Strings as the Return Type in the SyncFramework Server API

Choosing strings as the return type in my SyncFramework server API enhances performance, flexibility, and control over data serialization. This approach optimizes data transmission, ensures compatibility with various clients, and simplifies managing data formats, offering a robust solution for C# and Web API developers.

Remote Exception Handling in SyncFramework

Explore exception handling in SyncFramework’s client-server architecture. Learn about throwing exceptions in the API and returning HTTP status codes. Discover best practices for handling exceptions server-side, interpreting HTTP status codes, and crafting error messages without exposing sensitive information. Create robust, user-friendly applications with effective exception handling.

To be, or not to be: Writing Reusable Tests for SyncFramework Interfaces in C#

Ensuring robust database synchronization, SyncFramework’s interfaces like IDeltaStore require thorough testing. By creating reusable base test classes and implementing concrete test classes, you ensure consistent behavior across all implementations. Automate these tests in your CI/CD pipeline for reliable, maintainable, and interchangeable components within your framework.

Breaking Solid: Challenges of Adding New Functionality to the Sync Framework

Introducing new functionality to a sync framework often challenges adherence to SOLID design principles. Each principle, from Single Responsibility to Dependency Inversion, presents specific dilemmas. For instance, adding new features can violate the Open/Closed Principle by necessitating modifications to existing code. Developers must strategically decide when to introduce breaking changes, considering factors like impact assessment, semantic versioning, and stakeholder engagement. Balancing innovation with design integrity requires thoughtful planning and robust testing practices. Ultimately, evolving the framework to meet user needs may sometimes mean bending established principles for greater functionality and efficiency.

Extending Interfaces in the Sync Framework: Best Practices and Trade-offs

In modern software development, extending interfaces like IDeltaStore and IDeltaProcessor in the Sync Framework to add events such as SavingDelta, SavedDelta, ProcessingDelta, and ProcessedDelta can enhance functionality but poses challenges. Extending existing interfaces is simpler but can break existing implementations and violate SOLID principles. Alternatively, adding new interfaces preserves backward compatibility and adheres to best practices, though it may introduce complexity and redundancy. Balancing these trade-offs is crucial. For maintaining backward compatibility and adhering to SOLID principles, adding new interfaces is preferred. However, extending interfaces might be viable in controlled environments with manageable updates.

Unlocking the Power of Augmented Data Models: Enhance Analytics and AI Integration for Better Insights

Discover the power of augmented data models, which extend traditional data models by integrating diverse data sources, advanced analytics, AI-driven embeddings, and enhanced data features. These models offer comprehensive insights, improved decision-making, and innovative capabilities, transforming how organizations leverage data for strategic advantage and operational efficiency.

The Transition from x86 to x64 in Windows: A Detailed Overview

The transition from x86 (32-bit) to x64 (64-bit) architecture in Windows marked a significant leap in computing capabilities. Introduced in the early 2000s, this shift allowed for better performance, enhanced security, and the ability to utilize more memory. To manage compatibility, Windows implemented separate directories for 32-bit (Program Files (x86)) and 64-bit (Program Files) applications. This change brought some confusion, particularly regarding program file structures and compatibility issues. Understanding these distinctions is crucial for optimizing system performance and navigating the modern computing landscape effectively.

Understanding CPU Translation Layers: ARM to x86/x64 for Windows, macOS, and Linux

As technology evolves, running software across different CPU architectures has become crucial. CPU translation layers let applications compiled for one architecture run on another, most commonly x86/x64 binaries on ARM hardware. Windows on ARM uses WOW64 and x86/x64 emulation, macOS employs Rosetta 2, and Linux leverages QEMU for this purpose. These layers translate instructions dynamically, often using Just-In-Time (JIT) compilation and caching techniques to optimize performance. Developers are encouraged to compile for multiple architectures and thoroughly test their applications. CPU translation layers help ensure compatibility and smooth interoperability, bridging the gap between ARM and x86/x64 across Windows, macOS, and Linux.

How ARM, x86, and Itanium Architectures Affect .NET Developers

Understanding how ARM, x86, and Itanium architectures impact .NET development is crucial for optimizing performance and ensuring compatibility. ARM’s energy efficiency benefits mobile devices, while x86 excels in high-performance tasks. Itanium, designed for high-end computing, requires unique optimization strategies. .NET supports various architectures, including x86, ARM, and limited Itanium. Developers must use appropriate NuGet packages—either architecture-agnostic or specific—to maintain performance and compatibility. By leveraging cross-platform capabilities, using the right packages, and thorough testing, developers can create efficient applications for diverse devices. This article explores these architectures’ effects on .NET development, highlighting essential strategies and tools.

Understanding CPU Architectures: ARM vs. x86

Explore the key differences between ARM and x86 CPU architectures. ARM, known for its power efficiency and scalability, dominates mobile and embedded markets. In contrast, x86 excels in high-performance computing for desktops and servers, with a rich software ecosystem. Discover why x86 remains essential for running legacy and specialized software, particularly in high-end gaming and professional applications.

A New Era of Computing: AI-Powered Devices Over Form Factor Innovations

[…] of AI with its Neural Processing Unit (NPU). This shift emphasizes enhancing existing devices with AI over creating new form factors. The Surface’s NPU enables real-time local processing, boosting productivity, personalization, and security by reducing reliance on cloud services. Unlike devices like the Humane Pin and Rabbit AI, which depend heavily on cloud connectivity, the Surface’s local AI processing offers faster and more secure performance. This innovation sets a new standard for future tech advancements.

Comparing OpenAI’s ChatGPT and Microsoft’s Copilot mobile apps

OpenAI’s ChatGPT and Microsoft’s Copilot are two powerful AI tools that have revolutionized the way we interact with technology. While both are designed to assist users in various tasks, they each have unique features that set them apart. ChatGPT, developed by OpenAI, is a large language model chatbot capable of communicating with users […]

Embracing the WSL: A DotNet Developer’s Perspective

Discover the benefits of Windows Subsystem for Linux (WSL) for .NET development. Enhance your workflow with Linux’s powerful features while leveraging the robust .NET ecosystem. Dive into a seamless integration that boosts productivity and broadens your development capabilities.

New version of SyncFramework for Efcore 8.0.0.X

I’m happy to announce the new version of SyncFramework for EfCore, targeting net8.0 and referencing the EfCore 8 NuGet packages as follows: EfCore Postgres version 8.0.4, EfCore PomeloMysql version 8.0.2, EfCore Sqlite version 8.0.5, and EfCore SqlServer version 8.0.5. You can download the new versions from Nuget.org and check the repo. Happy synchronization, everyone!

Design Patterns for Library Creators in Dotnet

Explore the world of design patterns with a focus on SOLID principles in .NET library creation. Using the SyncFramework as an example, we delve into how these principles lead to more understandable, flexible, and maintainable software. Stay tuned for real-world examples in our upcoming series.

Semantic Kernel Connectors and Plugins

Welcome to the fascinating world of artificial intelligence (AI)! You’ve probably heard about AI’s incredible potential to transform our lives, from smart assistants in our homes to self-driving cars. But have you ever wondered how all these intelligent systems communicate and work together? That’s where something called “Semantic Kernel Connectors” comes in. Imagine you’re organizing […]

Semantic Kernel: Your Friendly AI Sidekick for Unleashing Creativity

Explore the power of AI with Microsoft’s Semantic Kernel.

Unlocking the Magic of IPFS Gateways: Your Bridge to a Decentralized Web

IPFS gateways serve as cosmic bridges, connecting our familiar HTTP web to the decentralized wonderland of IPFS. Imagine fetching content from the stars and translating it into earthly language—these gateways do just that. Whether you’re sharing interstellar recipes or marveling at celestial cat memes, IPFS gateways make the cosmos accessible to all.

Mastering Symbolic Links: Unleashing the Power of Symlinks for Efficient File Management

Explore the concept of symbolic links, or symlinks, in file systems. Learn about soft and hard links, their uses, and how to create them in Windows. Discover how symlinks can manage storage by moving large files, like LLM files, to an external drive while maintaining accessibility.
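The storage scenario described above (move a large file elsewhere, keep it reachable at its old path) can be sketched in a few lines of Python. The helper name `relocate_with_symlink` is hypothetical, and the sketch assumes the process is allowed to create symlinks; on Windows that typically requires Developer Mode or an elevated prompt.

```python
import os
import shutil


def relocate_with_symlink(original_path, new_dir):
    """Move a file into new_dir and leave a symbolic link at its old location.

    Hypothetical helper for illustration. Returns the file's new path.
    """
    original_path = os.path.abspath(original_path)
    target = os.path.join(os.path.abspath(new_dir), os.path.basename(original_path))

    shutil.move(original_path, target)   # works across drives, unlike os.rename
    os.symlink(target, original_path)    # soft link: old path now points at target
    return target
```

After calling `relocate_with_symlink("model.gguf", "/mnt/external")` (both paths are placeholders), programs that open the old path are transparently redirected to the file on the other drive. A hard link would not help in this scenario, because hard links cannot span volumes.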

A Beginner’s Guide to System.Security.SecurityRules and SecuritySafeCritical in C#

Explore the critical security attributes in .NET Framework – `System.Security.SecurityRules` and `SecuritySafeCritical`. Understand their roles, relationship, and usage in enforcing Code Access Security. Learn about trusted code and see practical examples of these attributes in action. Remember, with great power comes great responsibility!

An Introduction to Dynamic Proxies and Their Application in ORM Libraries with Castle.Core

This article provides an introduction to Castle.Core and dynamic proxies, focusing on their application in Object-Relational Mapping (ORM) Libraries. It explains how Castle.Core, a popular open-source project, offers common abstractions and has been downloaded over 88 million times. The article simplifies the concept of dynamic proxies and highlights their significance in intercepting method calls and implementing aspect-oriented programming. It also presents a simple example of creating a dynamic proxy using Castle.Core. The article concludes by emphasizing the utility of Castle.Core and dynamic proxies in enhancing programming capabilities, especially in ORM libraries.

Understanding Non-Fungible Tokens (NFTs)

This article provides an in-depth understanding of Non-Fungible Tokens (NFTs), their representation, the role of smart contracts in minting NFTs, and the difference between fungible and non-fungible tokens. It also highlights the use of OpenZeppelin contracts in the NFT space.

Finding Out the Invoking Methods in .NET

In .NET, it’s possible to find out the methods that are invoking a specific method. This can be particularly useful when you don’t have the source code available. One way to achieve this is by throwing an exception and examining the call stack. Here’s how you can do […]

Blockchain in Healthcare: A Revolution in Medical Records Management

Blockchain technology, particularly Ethereum-like blockchains, can revolutionize the healthcare industry by providing a secure and organized exchange of data within the medical community. This technology offers a secure, decentralized, and transparent platform that can address many of the pressing issues in healthcare, such as fragmented and siloed records, and difficult access to patients’ own health information.

Using Blockchain for Carbon Credit Sales

This article discusses using blockchain technology to facilitate the sale of carbon credits. It gives an overview of the process and the benefits of using blockchain for such transactions, includes real-world applications and case studies to illustrate the concept, and offers expert opinions and analysis on the potential impact of blockchain on the carbon credit market.

Understanding OpenVPN and DD-WRT

In today’s digital age, ensuring the security of our online activities and expanding the capabilities of our home networks are more important than ever. Two powerful tools that can help you achieve these goals are OpenVPN and DD-WRT. Here’s a straightforward guide to understanding what these technologies are and how they can be beneficial. What […]

Understanding Ethereum, Smart Contracts, and Blockchain Comparisons

The Ethereum Virtual Machine (EVM) acts as a decentralized global computer, made up of thousands of individual computers around the world. This network supports smart contracts—self-executing contracts with the terms directly written into code, eliminating the need for intermediaries. Smart contracts enable automated, secure, and efficient transactions, such as automatic rent payments from a tenant to a landlord. Beyond Ethereum, other blockchains like Solana, Polygon, and TON (The Open Network) also support smart contracts, each offering distinct advantages. Solana is renowned for its exceptional processing speed and low transaction costs, making it highly scalable and cost-effective for operations. Polygon enhances Ethereum by providing faster and cheaper transaction capabilities on its side-chain, significantly improving the transaction processing time and reducing costs. TON is designed to be fast and efficient, supports a variety of features including decentralized storage, and aims to make blockchain technology accessible to the mainstream market. These blockchains enhance user experience through faster transactions, lower costs, and high scalability. They also ensure security and reliability due to their decentralized nature. Each platform caters to different needs, allowing developers to select the most suitable blockchain based on their specific requirements for efficiency and functionality. The choice of blockchain can profoundly impact the efficiency, cost, and scalability of applications, making it crucial for developers to understand the unique features and benefits of each.

Navigating the Challenges of Event-Based Systems

This article explores the challenges of event-based systems, including debugging complexities, ensuring event ordering, and managing data consistency. It highlights key issues such as latency, throughput, and security concerns, offering insights into overcoming these hurdles for building robust, scalable, and efficient event-driven architectures, ensuring successful implementation and operation.

Understanding AppDomains in .NET Framework and .NET 5 to 8

In the .NET Framework, AppDomains provided a secure, isolated environment for applications to run within a single process, enabling dynamic assembly loading and unloading without impacting the entire application. With the advent of .NET 5 to 8, the focus shifted towards cross-platform compatibility and microservices, leading to the deprecation of AppDomains in favor of AssemblyLoadContext for assembly management and containers for application isolation. This transition reflects modern development practices, emphasizing performance, scalability, and compatibility across different platforms. Understanding these changes is crucial for developers migrating from the .NET Framework to newer .NET versions, as it affects application structure and deployment.

Carbon Sequestration: A Vital Process for Climate Change Mitigation

Carbon sequestration is essential for mitigating climate change by capturing atmospheric CO2. It involves biological and geological methods to store carbon in vegetation, soils, oceans, and underground formations. While promising, it requires careful monitoring due to potential side effects like leakage and seismic events triggered by CO2 injection.

Understanding Carbon Credit Allowances

Carbon credit allowances play a crucial role in combating climate change through a cap-and-trade system that limits greenhouse gas emissions and permits trading of emission units. Entities like the California Air Resources Board, Regional Greenhouse Gas Initiative, and Quebec’s Cap-and-Trade System issue these allowances, supporting a significant carbon market in North America. Alongside, voluntary standards such as Verra and the Gold Standard certify projects for carbon credits, contributing to global efforts against climate change. Understanding and participating in these systems allows businesses and individuals to actively contribute to reducing carbon footprints and advancing towards a more sustainable future.

Good News for Copilot Users: Generative AI for All!

In the latest tech update, Microsoft Copilot undergoes a transformative expansion, introducing Copilot Pro for individual users and enhancing accessibility for businesses of all sizes. This marks a significant step in democratizing generative AI technology. Key developments include the general availability of Copilot for Microsoft 365 for small and medium businesses, the removal of the 300-seat minimum for commercial plans, and eligibility expansion to Office 365 E3 and E5 customers. These changes promise to revolutionize work dynamics across diverse sectors. Microsoft’s vision of integrating AI into everyday work and personal life takes a leap forward with Copilot’s evolving capabilities.

Carbon Credits 101

Carbon credits control CO2 emissions through a market mechanism, incentivizing companies to reduce their carbon footprint and invest in cleaner technologies, ultimately balancing economic growth with environmental responsibility.

SQLite and Its Journal Modes

SQLite, a popular lightweight database, offers various journal modes to manage transactions and ensure data integrity. These modes include Delete, the default mode creating a rollback file; Truncate, which speeds up transactions by truncating this file; Persist, reducing file operations by leaving the journal file inactive; Memory, for high-speed transactions using RAM; Write-Ahead Logging (WAL), enhancing concurrency and data durability; and Off, for maximum speed where data integrity is not a priority. Understanding these modes allows for optimized database performance, balancing between speed, resource usage, and data consistency, making SQLite versatile for a range of applications.
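
As a rough illustration of the modes listed above, the sketch below switches a throwaway database through each journal mode and records what SQLite reports back. It uses Python's standard `sqlite3` module; the helper name `set_journal_mode` and the file name `demo.db` are assumptions made for this example, not something taken from the article.

```python
import os
import sqlite3
import tempfile

# Journal modes named in the summary above.
ALLOWED_MODES = ("DELETE", "TRUNCATE", "PERSIST", "MEMORY", "WAL", "OFF")


def set_journal_mode(db_path: str, mode: str) -> str:
    """Ask SQLite to switch journal mode and return the mode now in effect."""
    if mode.upper() not in ALLOWED_MODES:
        raise ValueError(f"unknown journal mode: {mode}")
    conn = sqlite3.connect(db_path)
    try:
        # PRAGMA journal_mode returns a single row holding the resulting mode.
        (active,) = conn.execute(f"PRAGMA journal_mode={mode}").fetchone()
        return active
    finally:
        conn.close()


with tempfile.TemporaryDirectory() as tmp:
    db_path = os.path.join(tmp, "demo.db")  # hypothetical file name
    reported = {mode: set_journal_mode(db_path, mode) for mode in ALLOWED_MODES}
    for requested, active in reported.items():
        print(f"{requested:8} -> {active}")
```

SQLite may decline a requested mode (for example, WAL is not available for in-memory databases), so checking the value the pragma returns is safer than assuming the switch succeeded.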

User-Defined Functions in SQLite: Enhancing SQL with Custom C# Procedures

SQLite enhances SQL by allowing the integration of user-defined functions within applications, enabling developers to extend database functionalities using their app’s programming language. Key features include scalar functions, which return a single value per row, and aggregate functions that consolidate data from multiple rows. Developers can define or override these functions using CreateFunction and CreateAggregate methods, respectively. Custom operators like glob, like, and regexp can also be defined, altering standard SQL operator behaviors. SQLite’s design ensures efficient error handling and supports full .NET debugging, streamlining the development of robust and efficient SQL custom functions.
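
The article itself works in C# with `CreateFunction` and `CreateAggregate`. As a language-neutral sketch of the same idea, the example below uses the analogous `create_function` and `create_aggregate` calls from Python's standard `sqlite3` module. The `words` table, the `longest` aggregate, and the sample rows are all hypothetical and exist only for this illustration.

```python
import re
import sqlite3


def regexp(pattern, value):
    """Scalar function: SQLite rewrites `X REGEXP Y` as regexp(Y, X)."""
    if value is None:
        return 0
    return int(re.search(pattern, value) is not None)


class Longest:
    """Aggregate function: keeps the longest string seen across all rows."""

    def __init__(self):
        self.best = ""

    def step(self, value):
        if value is not None and len(value) > len(self.best):
            self.best = value

    def finalize(self):
        return self.best


conn = sqlite3.connect(":memory:")
conn.create_function("regexp", 2, regexp)      # enables the REGEXP operator
conn.create_aggregate("longest", 1, Longest)   # hypothetical aggregate name

conn.execute("CREATE TABLE words (w TEXT)")
conn.executemany(
    "INSERT INTO words VALUES (?)", [("apple",), ("banana",), ("fig",)]
)

matches = [
    row[0]
    for row in conn.execute("SELECT w FROM words WHERE w REGEXP '^[ab]' ORDER BY w")
]
longest = conn.execute("SELECT longest(w) FROM words").fetchone()[0]
print(matches, longest)
```

SQLite ships no built-in implementation of `REGEXP`, so registering a `regexp` function is what makes that operator usable in queries on this connection.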

LangChain

In the dynamic field of artificial intelligence, LangChain emerges as a pivotal framework, revolutionizing the use of large language models like GPT-3. Developed by Harrison Chase, LangChain is designed for the easy integration and application of these models in various computational tasks. This open-source framework marks a significant stride in AI and NLP, offering a modular and scalable platform for developers. Its historical roots trace back to the advent of advanced language models, addressing the need for practical application tools. LangChain finds diverse applications in areas such as customer service, content creation, and data analysis, enhancing efficiency and creativity. Its role in democratizing AI technology highlights its potential for future innovations. LangChain is not just a software framework; it’s a key player in the ongoing narrative of AI’s impact across different sectors, promising a future rich in AI-driven advancements.

Run A.I models locally with Ollama

In this insightful article, we delve into the dynamic world of the Ollama AI framework, a cutting-edge platform that runs large language models (LLMs) directly on your local machine. We explore a diverse range of models available in Ollama, each tailored to specific computational needs and applications. From the versatile Llama 2 to the coding-focused Code Llama, and the powerful Llama 2 with 70 billion parameters, this piece provides a comprehensive overview of the models you can utilize for your projects. Whether you’re a developer, a data scientist, or an AI enthusiast, this article is your guide to understanding and harnessing the power of Ollama’s extensive model library.

Understanding LLM Limitations and the Advantages of RAG

Exploring the intricacies of artificial intelligence, this article sheds light on the limitations of Large Language Models (LLMs), particularly focusing on issues like outdated information and lack of data source attribution. It juxtaposes these challenges with the innovative approach of Retrieval-Augmented Generation (RAG), which integrates real-time data retrieval with generative models, offering a more dynamic, credible, and transparent solution in the AI landscape. By highlighting the advantages of RAG over traditional fine-tuning methods in LLMs, the article underscores the importance of continuous evolution in AI technologies for enhanced reliability and accuracy in various applications.

Enhancing AI Language Models with Retrieval-Augmented Generation

Exploring Retrieval-Augmented Generation (RAG), this article delves into how it revolutionizes AI language models. RAG merges language generation with external data retrieval, enhancing response accuracy and relevance in various sectors like customer service, education, healthcare, and media.

The Steps to Create, Train, Save, and Load a Spam Detection AI Model Using ML.NET

This article not only demonstrates the process of creating, training, saving, and loading a spam detection AI model using ML.NET, but also emphasizes the reusability of the trained model. By following the steps in the article, you will be able to create a model that can be easily reused and integrated into your .NET applications, allowing you to effectively identify and filter out spam emails.

The Meme: A Cultural A.I Embedding

In the fascinating intersection of AI and culture, embeddings in artificial intelligence and memes share a surprising similarity. Both are methods of abstraction and distillation: AI embeddings transform complex data into lower-dimensional, meaningful forms, while memes encapsulate collective human experiences into universally relatable images and texts. This comparison not only sheds light on the nuanced capabilities of AI but also emphasizes the cultural significance of memes, offering profound insights into the evolving relationship between technology and human expression.

ONNX: Revolutionizing Interoperability in Machine Learning

The field of machine learning (ML) and artificial intelligence (AI) has witnessed a groundbreaking innovation in the form of ONNX (Open Neural Network Exchange). This open-source model format is redefining the norms of model sharing and interoperability across various ML frameworks. In this article, we explore the ONNX […]

ML vs BERT vs GPT: Understanding Different AI Model Paradigms

In the dynamic world of artificial intelligence (AI) and machine learning (ML), diverse models such as ML.NET, BERT, and GPT each play a pivotal role in shaping the landscape of technological advancements. This article embarks on an exploratory journey to compare and contrast these three distinct AI paradigms. Our goal is to provide clarity and […]

ML Model Formats and File Extensions

The realm of machine learning (ML) and artificial intelligence (AI) is marked by an array of model formats, each serving distinct purposes and ecosystems. The choice of a model format is a pivotal decision that can influence the development, deployment, and sharing of ML models. In this article, […]

Machine Learning and AI: Embeddings

In the realms of machine learning (ML) and artificial intelligence (AI), embeddings play a crucial role. They transform complex, high-dimensional data into more manageable low-dimensional vectors, preserving essential properties. Embeddings are particularly vital in natural language processing (NLP), enabling ML models to effectively interpret text. Their creation involves sophisticated models like Word2Vec and CNNs, trained on extensive data to capture nuanced features. This article delves into the fundamentals of embeddings, underscoring their significance in advancing AI technologies.

Understanding Machine Learning Models

Discover the fundamentals of machine learning models: their types, differences, and usage. Learn about algorithms that transform input data into insightful predictions and decisions. Explore the diversity of models, from linear regression to neural networks, and understand their unique learning styles and tasks. Grasp the essential steps of selecting, training, and deploying these models, supported by tools like scikit-learn, TensorFlow, and PyTorch. This guide serves as a concise introduction to harnessing the power of machine learning in data-driven decision making.

Support Vector Machines (SVM) in AI and ML

This article delves into the world of Support Vector Machines (SVM), a pivotal supervised learning technique in artificial intelligence (AI) and machine learning (ML). Originating from the groundbreaking work of Vladimir Vapnik and Alexey Chervonenkis in the 1960s, SVMs have evolved to become key tools in handling classification and regression tasks, especially in scenarios involving high-dimensional data. The article provides insights into the historical development of SVMs, including the introduction of the soft margin concept and the revolutionary kernel trick. It also explores various applications of SVMs, ranging from bioinformatics to financial analysis, highlighting their versatility and effectiveness. In conclusion, the article underscores the enduring relevance of SVMs in the rapidly evolving field of AI and ML, noting their unique strengths in model interpretability and efficiency with smaller datasets. As we continue to witness advancements in technology, SVMs remain a vital component in the data scientist’s toolkit.

Introduction to Machine Learning in C#: Spam Detection using Binary Classification

Discover the fascinating world of machine learning in C# using the ML.NET framework in this insightful article. From defining data models to implementing a spam detection system, the article provides a step-by-step guide for integrating AI technologies into .NET applications. It delves into the practicalities of setting up NUnit test projects in Visual Studio Code, enhancing the learning experience with real-world examples. The piece concludes with reflections on the potential of machine learning to revolutionize software development and a glimpse into the future of AI applications. This article is an essential read for anyone interested in the intersection of programming, technology education, and the ever-evolving landscape of artificial intelligence.

Understanding Neural Networks

In the ever-evolving realm of technology, neural networks stand at the forefront of innovation, driving the future of artificial intelligence (AI). These complex systems, mirroring the intricate structure of the human brain, are revolutionizing the way machines learn, process data, and make decisions. From the pioneering efforts of Warren McCulloch and Walter Pitts to the game-changing backpropagation algorithm, the journey of neural networks is a testament to human ingenuity and the relentless pursuit of knowledge. In this article, we delve into the fundamentals of neural networks, tracing their historical roots and exploring their role in modern AI. We uncover how these networks, composed of layers of interconnected nodes or neurons, analyze vast amounts of data to identify patterns and make predictions. Through a simple example of email classification, we demonstrate how neural networks can distinguish between ‘spam’ and ‘not spam,’ showcasing their practical applications in everyday life. Join us as we navigate the intricate pathways of neural networks, unveiling the mysteries of machine learning and opening doors to a future where AI shapes the fabric of our reality.

Decision Trees and Naive Bayes Classifiers

Decision Trees Overview: Decision trees are a type of supervised learning algorithm used for classification and regression tasks. They work by breaking down a dataset into smaller subsets while at the same time developing an associated decision tree incrementally. The final model is a tree with decision nodes and […]

Machine Learning: History, Concepts, and Application